Jetson Inference

DNN Vision Library

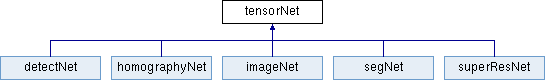

Abstract class for loading a tensor network with TensorRT.

`#include <tensorNet.h>`

Classes

| Kind | Name | Description |
| --- | --- | --- |
| class | `Logger` | Logger class for GIE info/warning/errors. |
| struct | `outputLayer` | |
| class | `Profiler` | Profiler interface for measuring layer timings. |

Public Member Functions

| Type | Member | Description |
| --- | --- | --- |
| `virtual` | `~tensorNet()` | Destroy. |
| `bool` | `LoadNetwork(const char *prototxt, const char *model, const char *mean=NULL, const char *input_blob="data", const char *output_blob="prob", uint32_t maxBatchSize=DEFAULT_MAX_BATCH_SIZE, precisionType precision=TYPE_FASTEST, deviceType device=DEVICE_GPU, bool allowGPUFallback=true, nvinfer1::IInt8Calibrator *calibrator=NULL, cudaStream_t stream=NULL)` | Load a new network instance. |
| `bool` | `LoadNetwork(const char *prototxt, const char *model, const char *mean, const char *input_blob, const std::vector< std::string > &output_blobs, uint32_t maxBatchSize=DEFAULT_MAX_BATCH_SIZE, precisionType precision=TYPE_FASTEST, deviceType device=DEVICE_GPU, bool allowGPUFallback=true, nvinfer1::IInt8Calibrator *calibrator=NULL, cudaStream_t stream=NULL)` | Load a new network instance with multiple output layers. |
| `bool` | `LoadNetwork(const char *prototxt, const char *model, const char *mean, const char *input_blob, const Dims3 &input_dims, const std::vector< std::string > &output_blobs, uint32_t maxBatchSize=DEFAULT_MAX_BATCH_SIZE, precisionType precision=TYPE_FASTEST, deviceType device=DEVICE_GPU, bool allowGPUFallback=true, nvinfer1::IInt8Calibrator *calibrator=NULL, cudaStream_t stream=NULL)` | Load a new network instance (this variant is used for UFF models). |
| `void` | `EnableLayerProfiler()` | Manually enable layer profiling times. |
| `void` | `EnableDebug()` | Manually enable debug messages and synchronization. |
| `bool` | `AllowGPUFallback() const` | Return true if GPU fallback is enabled. |
| `deviceType` | `GetDevice() const` | Retrieve the device being used for execution. |
| `precisionType` | `GetPrecision() const` | Retrieve the type of precision being used. |
| `bool` | `IsPrecision(precisionType type) const` | Check if a particular precision is being used. |
| `cudaStream_t` | `GetStream() const` | Retrieve the stream that the device is operating on. |
| `cudaStream_t` | `CreateStream(bool nonBlocking=true)` | Create and use a new stream for execution. |
| `void` | `SetStream(cudaStream_t stream)` | Set the stream that the device is operating on. |
| `const char *` | `GetPrototxtPath() const` | Retrieve the path to the network prototxt file. |
| `const char *` | `GetModelPath() const` | Retrieve the path to the network model file. |
| `modelType` | `GetModelType() const` | Retrieve the format of the network model. |
| `bool` | `IsModelType(modelType type) const` | Return true if the model is of the specified format. |
| `float` | `GetNetworkTime()` | Retrieve the network runtime (in milliseconds). |
| `float2` | `GetProfilerTime(profilerQuery query)` | Retrieve the profiler runtime (in milliseconds). |
| `float` | `GetProfilerTime(profilerQuery query, profilerDevice device)` | Retrieve the profiler runtime (in milliseconds). |
| `void` | `PrintProfilerTimes()` | Print the profiler times (in milliseconds). |

Static Public Member Functions

| Type | Member | Description |
| --- | --- | --- |
| `static precisionType` | `FindFastestPrecision(deviceType device=DEVICE_GPU, bool allowInt8=true)` | Determine the fastest native precision on a device. |
| `static std::vector< precisionType >` | `DetectNativePrecisions(deviceType device=DEVICE_GPU)` | Detect the precisions supported natively on a device. |
| `static bool` | `DetectNativePrecision(const std::vector< precisionType > &nativeTypes, precisionType type)` | Detect if a particular precision is supported natively. |
| `static bool` | `DetectNativePrecision(precisionType precision, deviceType device=DEVICE_GPU)` | Detect if a particular precision is supported natively. |

Protected Member Functions

| Type | Member | Description |
| --- | --- | --- |
| | `tensorNet()` | Constructor. |
| `bool` | `ProfileModel(const std::string &deployFile, const std::string &modelFile, const char *input, const Dims3 &inputDims, const std::vector< std::string > &outputs, uint32_t maxBatchSize, precisionType precision, deviceType device, bool allowGPUFallback, nvinfer1::IInt8Calibrator *calibrator, std::ostream &modelStream)` | Create and output an optimized network model. |
| `void` | `PROFILER_BEGIN(profilerQuery query)` | Begin a profiling query, before the network is run. |
| `void` | `PROFILER_END(profilerQuery query)` | End a profiling query, after the network is run. |
| `bool` | `PROFILER_QUERY(profilerQuery query)` | Query the CUDA part of a profiler query. |

Protected Attributes

| Type | Name |
| --- | --- |
| `tensorNet::Logger` | `gLogger` |
| `tensorNet::Profiler` | `gProfiler` |
| `std::string` | `mPrototxtPath` |
| `std::string` | `mModelPath` |
| `std::string` | `mMeanPath` |
| `std::string` | `mInputBlobName` |
| `std::string` | `mCacheEnginePath` |
| `std::string` | `mCacheCalibrationPath` |
| `deviceType` | `mDevice` |
| `precisionType` | `mPrecision` |
| `modelType` | `mModelType` |
| `cudaStream_t` | `mStream` |
| `cudaEvent_t` | `mEventsGPU[PROFILER_TOTAL * 2]` |
| `timespec` | `mEventsCPU[PROFILER_TOTAL * 2]` |
| `nvinfer1::IRuntime *` | `mInfer` |
| `nvinfer1::ICudaEngine *` | `mEngine` |
| `nvinfer1::IExecutionContext *` | `mContext` |
| `uint32_t` | `mWidth` |
| `uint32_t` | `mHeight` |
| `uint32_t` | `mInputSize` |
| `float *` | `mInputCPU` |
| `float *` | `mInputCUDA` |
| `float2` | `mProfilerTimes[PROFILER_TOTAL + 1]` |
| `uint32_t` | `mProfilerQueriesUsed` |
| `uint32_t` | `mProfilerQueriesDone` |
| `uint32_t` | `mMaxBatchSize` |
| `bool` | `mEnableProfiler` |
| `bool` | `mEnableDebug` |
| `bool` | `mAllowGPUFallback` |
| `Dims3` | `mInputDims` |
| `std::vector< outputLayer >` | `mOutputs` |

Abstract class for loading a tensor network with TensorRT.

For example implementations,

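`virtual tensorNet::~tensorNet()`

Destroy.

`tensorNet::tensorNet()` (protected)

Constructor.

`bool tensorNet::AllowGPUFallback() const` (inline)

Return true if GPU fallback is enabled.

`cudaStream_t tensorNet::CreateStream(bool nonBlocking = true)`

Create and use a new stream for execution.

`static bool tensorNet::DetectNativePrecision(const std::vector< precisionType > &nativeTypes, precisionType type)`

Detect if a particular precision is supported natively.

`static bool tensorNet::DetectNativePrecision(precisionType precision, deviceType device = DEVICE_GPU)`

Detect if a particular precision is supported natively.

`static std::vector< precisionType > tensorNet::DetectNativePrecisions(deviceType device = DEVICE_GPU)`

Detect the precisions supported natively on a device.

`void tensorNet::EnableDebug()`

Manually enable debug messages and synchronization.

`void tensorNet::EnableLayerProfiler()`

Manually enable layer profiling times.

`static precisionType tensorNet::FindFastestPrecision(deviceType device = DEVICE_GPU, bool allowInt8 = true)`

Determine the fastest native precision on a device.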
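The precision helpers can be read as a membership test over a list of native precisions, followed by a preference order. The sketch below illustrates that idea without depending on the library. It is an assumption about the behavior, not the library's implementation: the enum is a stand-in (only `TYPE_FASTEST` appears on this page), the lowercase function names are hypothetical, and the INT8, then FP16, then FP32 preference order is assumed.

```cpp
#include <algorithm>
#include <vector>

// Stand-in for the library's precisionType enum. TYPE_FASTEST appears on this
// page; the other enumerators and all numeric values are assumptions.
enum precisionType { TYPE_FASTEST, TYPE_FP32, TYPE_FP16, TYPE_INT8 };

// Hypothetical counterpart of DetectNativePrecision(nativeTypes, type):
// returns true if `type` is present in the list of native precisions.
bool detectNativePrecision(const std::vector<precisionType>& nativeTypes,
                           precisionType type)
{
    return std::find(nativeTypes.begin(), nativeTypes.end(), type) != nativeTypes.end();
}

// Hypothetical counterpart of FindFastestPrecision. The real function takes a
// deviceType; here the list of native precisions is passed in directly.
// Assumed policy: prefer INT8 (if allowed), then FP16, otherwise FP32.
precisionType findFastestPrecision(const std::vector<precisionType>& nativeTypes,
                                   bool allowInt8 = true)
{
    if (allowInt8 && detectNativePrecision(nativeTypes, TYPE_INT8))
        return TYPE_INT8;

    if (detectNativePrecision(nativeTypes, TYPE_FP16))
        return TYPE_FP16;

    return TYPE_FP32;
}
```

Under these assumptions, a device reporting FP32, FP16, and INT8 would select INT8 by default and FP16 when `allowInt8` is false.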

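`deviceType tensorNet::GetDevice() const` (inline)

Retrieve the device being used for execution.

`const char * tensorNet::GetModelPath() const` (inline)

Retrieve the path to the network model file.

`modelType tensorNet::GetModelType() const` (inline)

Retrieve the format of the network model.

`float tensorNet::GetNetworkTime()` (inline)

Retrieve the network runtime (in milliseconds).

`precisionType tensorNet::GetPrecision() const` (inline)

Retrieve the type of precision being used.

`float2 tensorNet::GetProfilerTime(profilerQuery query)` (inline)

Retrieve the profiler runtime (in milliseconds).

`float tensorNet::GetProfilerTime(profilerQuery query, profilerDevice device)` (inline)

Retrieve the profiler runtime (in milliseconds).

`const char * tensorNet::GetPrototxtPath() const` (inline)

Retrieve the path to the network prototxt file.

`cudaStream_t tensorNet::GetStream() const` (inline)

Retrieve the stream that the device is operating on.

`bool tensorNet::IsModelType(modelType type) const` (inline)

Return true if the model is of the specified format.

`bool tensorNet::IsPrecision(precisionType type) const` (inline)

Check if a particular precision is being used.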

`bool tensorNet::LoadNetwork(const char *prototxt, const char *model, const char *mean = NULL, const char *input_blob = "data", const char *output_blob = "prob", uint32_t maxBatchSize = DEFAULT_MAX_BATCH_SIZE, precisionType precision = TYPE_FASTEST, deviceType device = DEVICE_GPU, bool allowGPUFallback = true, nvinfer1::IInt8Calibrator *calibrator = NULL, cudaStream_t stream = NULL)`

Load a new network instance.

| Parameter | Description |
| --- | --- |
| prototxt | File path to the deployable network prototxt |
| model | File path to the caffemodel |
| mean | File path to the mean value binary proto (NULL if none) |
| input_blob | The name of the input blob data to the network. |
| output_blob | The name of the output blob data from the network. |
| maxBatchSize | The maximum batch size that the network will be optimized for. |

`bool tensorNet::LoadNetwork(const char *prototxt, const char *model, const char *mean, const char *input_blob, const std::vector< std::string > &output_blobs, uint32_t maxBatchSize = DEFAULT_MAX_BATCH_SIZE, precisionType precision = TYPE_FASTEST, deviceType device = DEVICE_GPU, bool allowGPUFallback = true, nvinfer1::IInt8Calibrator *calibrator = NULL, cudaStream_t stream = NULL)`

Load a new network instance with multiple output layers.

| Parameter | Description |
| --- | --- |
| prototxt | File path to the deployable network prototxt |
| model | File path to the caffemodel |
| mean | File path to the mean value binary proto (NULL if none) |
| input_blob | The name of the input blob data to the network. |
| output_blobs | List of names of the output blobs from the network. |
| maxBatchSize | The maximum batch size that the network will be optimized for. |
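The single-output and multi-output forms of `LoadNetwork` differ only in how the outputs are named, so one way to picture their relationship is the single-output form wrapping its blob name in a one-element list and forwarding. The sketch below shows that overload pattern with a stand-in class. `LoaderSketch`, `kDefaultMaxBatchSize` (and its value of 1), the accessors, and the file names are all hypothetical; the precision, device, calibrator, and stream parameters are omitted; nothing here loads a model, and the forwarding itself is an assumption rather than documented behavior.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Assumed stand-in for DEFAULT_MAX_BATCH_SIZE; the real value is not given on this page.
static const std::uint32_t kDefaultMaxBatchSize = 1;

// Hypothetical stand-in for tensorNet. It records its arguments instead of
// building a TensorRT engine, so the overload pattern can be exercised alone.
class LoaderSketch
{
public:
    // Multi-output form: every output blob is named explicitly.
    bool LoadNetwork(const char* prototxt, const char* model, const char* mean,
                     const char* input_blob,
                     const std::vector<std::string>& output_blobs,
                     std::uint32_t maxBatchSize = kDefaultMaxBatchSize)
    {
        if (!prototxt || !model || !input_blob || output_blobs.empty())
            return false;

        mPrototxtPath  = prototxt;
        mModelPath     = model;
        mMeanPath      = mean ? mean : "";   // NULL means "no mean file"
        mInputBlobName = input_blob;
        mOutputNames   = output_blobs;
        mMaxBatchSize  = maxBatchSize;
        return true;
    }

    // Single-output form: wraps the one blob name and forwards (assumed behavior).
    bool LoadNetwork(const char* prototxt, const char* model,
                     const char* mean = nullptr,
                     const char* input_blob = "data",
                     const char* output_blob = "prob",
                     std::uint32_t maxBatchSize = kDefaultMaxBatchSize)
    {
        if (!output_blob)
            return false;

        const std::vector<std::string> outputs{ output_blob };
        return LoadNetwork(prototxt, model, mean, input_blob, outputs, maxBatchSize);
    }

    const std::string& inputBlob() const            { return mInputBlobName; }
    const std::vector<std::string>& outputs() const { return mOutputNames; }

private:
    std::string mPrototxtPath, mModelPath, mMeanPath, mInputBlobName;
    std::vector<std::string> mOutputNames;
    std::uint32_t mMaxBatchSize = kDefaultMaxBatchSize;
};
```

With this stand-in, `net.LoadNetwork("deploy.prototxt", "weights.caffemodel")` records the default blobs `"data"` and `"prob"`, while passing a `std::vector<std::string>` as the fifth argument selects the multi-output overload.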

`bool tensorNet::LoadNetwork(const char *prototxt, const char *model, const char *mean, const char *input_blob, const Dims3 &input_dims, const std::vector< std::string > &output_blobs, uint32_t maxBatchSize = DEFAULT_MAX_BATCH_SIZE, precisionType precision = TYPE_FASTEST, deviceType device = DEVICE_GPU, bool allowGPUFallback = true, nvinfer1::IInt8Calibrator *calibrator = NULL, cudaStream_t stream = NULL)`

Load a new network instance (this variant is used for UFF models).

| Parameter | Description |
| --- | --- |
| prototxt | File path to the deployable network prototxt |
| model | File path to the caffemodel |
| mean | File path to the mean value binary proto (NULL if none) |
| input_blob | The name of the input blob data to the network. |
| input_dims | The dimensions of the input blob (used for UFF). |
| output_blobs | List of names of the output blobs from the network. |
| maxBatchSize | The maximum batch size that the network will be optimized for. |

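`void tensorNet::PrintProfilerTimes()` (inline)

Print the profiler times (in milliseconds).

`bool tensorNet::ProfileModel(const std::string &deployFile, const std::string &modelFile, const char *input, const Dims3 &inputDims, const std::vector< std::string > &outputs, uint32_t maxBatchSize, precisionType precision, deviceType device, bool allowGPUFallback, nvinfer1::IInt8Calibrator *calibrator, std::ostream &modelStream)` (protected)

Create and output an optimized network model.

| Parameter | Description |
| --- | --- |
| deployFile | name for network prototxt |
| modelFile | name for model |
| outputs | network outputs |
| maxBatchSize | maximum batch size |
| modelStream | output model stream |

`void tensorNet::PROFILER_BEGIN(profilerQuery query)` (inline, protected)

Begin a profiling query, before the network is run.

`void tensorNet::PROFILER_END(profilerQuery query)` (inline, protected)

End a profiling query, after the network is run.

`bool tensorNet::PROFILER_QUERY(profilerQuery query)` (inline, protected)

Query the CUDA part of a profiler query.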
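The begin/end/query trio, together with the `mEventsCPU[PROFILER_TOTAL * 2]`, `mProfilerQueriesUsed`, and `mProfilerQueriesDone` attributes, suggests a scheme in which each query owns a pair of timestamps and a bit in two bitmasks. The sketch below illustrates that bookkeeping for the CPU side only, using `std::chrono` in place of `timespec` and leaving out CUDA events entirely. The class `CpuProfilerSketch`, its method names, the enumerators other than `PROFILER_TOTAL`, and the slot layout (begin at `2*q`, end at `2*q + 1`) are assumptions for illustration.

```cpp
#include <chrono>
#include <cstdint>

// Stand-in for profilerQuery. PROFILER_TOTAL appears on this page; the other
// enumerators are assumed for the example.
enum profilerQuery { PROFILER_PREPROCESS = 0, PROFILER_NETWORK, PROFILER_TOTAL };

// Hypothetical CPU-only profiler: two timestamps per query plus used/done bitmasks.
class CpuProfilerSketch
{
public:
    typedef std::chrono::steady_clock Clock;

    // Record the start timestamp and mark the query as used but not yet resolved.
    void begin(profilerQuery q)
    {
        const std::uint32_t flag = 1u << q;
        mEvents[q * 2] = Clock::now();
        mQueriesUsed |= flag;
        mQueriesDone &= ~flag;
    }

    // Record the end timestamp in the second slot of the pair.
    void end(profilerQuery q)
    {
        mEvents[q * 2 + 1] = Clock::now();
    }

    // Resolve the elapsed time once per begin/end pair.
    // Returns false if the query was never started.
    bool resolve(profilerQuery q)
    {
        const std::uint32_t flag = 1u << q;

        if (!(mQueriesUsed & flag))
            return false;

        if (!(mQueriesDone & flag))
        {
            const std::chrono::duration<float, std::milli> ms(mEvents[q * 2 + 1] - mEvents[q * 2]);
            mTimesMs[q] = ms.count();
            mQueriesDone |= flag;
        }

        return true;
    }

    // Elapsed time in milliseconds, or 0 if the query was never started.
    float timeMs(profilerQuery q)
    {
        return resolve(q) ? mTimesMs[q] : 0.0f;
    }

private:
    Clock::time_point mEvents[PROFILER_TOTAL * 2];
    float             mTimesMs[PROFILER_TOTAL] = {};
    std::uint32_t     mQueriesUsed = 0;
    std::uint32_t     mQueriesDone = 0;
};
```

A caller would bracket the work with `begin(PROFILER_NETWORK)` and `end(PROFILER_NETWORK)`, then read `timeMs(PROFILER_NETWORK)`. Caching the result behind the done bit means the elapsed time is computed once per begin/end pair, which matters more on the GPU side, where resolving an event can require synchronization.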

`void tensorNet::SetStream(cudaStream_t stream)`

Set the stream that the device is operating on.
