Media Source Extensions v0.5

Draft Proposal

- Editors:

- Aaron Colwell, Google, Inc.

- Adrian Bateman, Microsoft Corporation

- Mark Watson, Netflix, Inc.

Copyright © 2012 W3C® (MIT, ERCIM, Keio), All Rights Reserved. W3C liability, trademark and document use rules apply.

This section describes the status of this document at the time of its publication. Other documents may supersede this document. A list of current W3C publications and the latest revision of this technical report can be found in the W3C technical reports index at http://www.w3.org/TR/.

This document was submitted to the HTML Working Group as an Unofficial Draft. If you wish to make comments regarding this document, please send them to public-html@w3.org (subscribe, archives). You may send feedback to public-html-comments@w3.org (subscribe, archives) without joining the working group. All feedback is welcome.

Publication as an Unofficial Draft does not imply endorsement by the W3C Membership. This is a draft document and may be updated, replaced or obsoleted by other documents at any time. It is inappropriate to cite this document as other than work in progress.

This document was produced by a group operating under the 5 February 2004 W3C Patent Policy. W3C maintains a public list of any patent disclosures made in connection with the deliverables of the group; that page also includes instructions for disclosing a patent. An individual who has actual knowledge of a patent which the individual believes contains Essential Claim(s) must disclose the information in accordance with section 6 of the W3C Patent Policy.

This proposal extends HTMLMediaElement to allow JavaScript to generate media streams for playback. Allowing JavaScript to generate streams facilitates a variety of use cases like adaptive streaming and time shifting live streams.

This proposal allows JavaScript to dynamically construct media streams for <audio> and <video>. It defines extensions to HTMLMediaElement that allow JavaScript to pass media segments to the user agent. A buffering model is also included to describe how the user agent should act when different media segments are appended at different times. Byte stream specifications for WebM & ISO Base Media File Format are given to specify the expected format of media segments used with these extensions.

This proposal was designed with the following goals in mind:

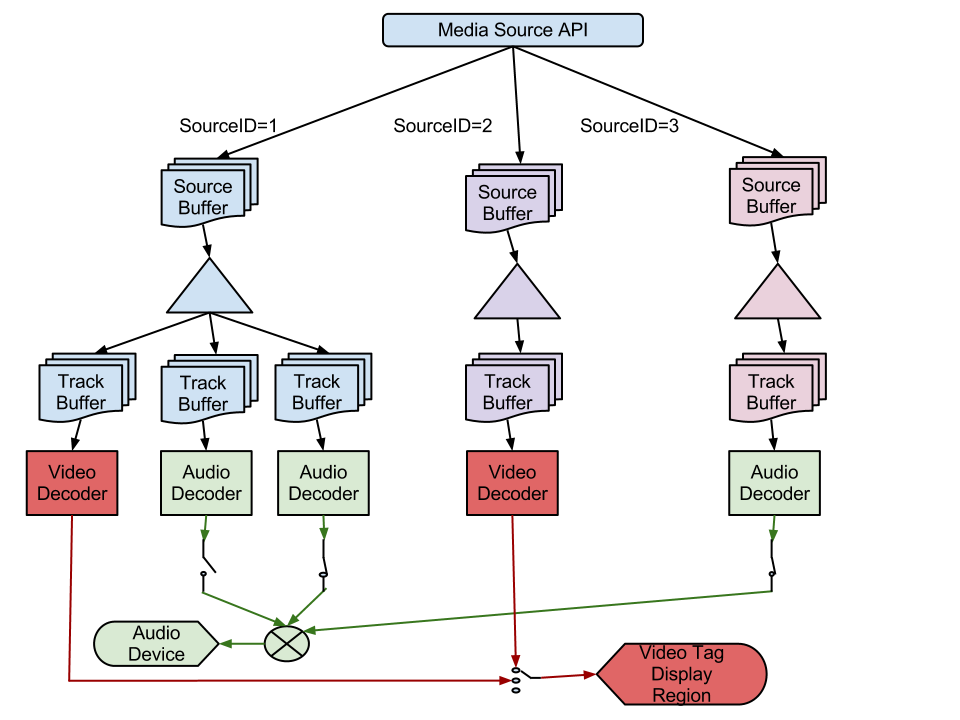

Active IDs

The set of source IDs that are providing the selected video track and the enabled audio tracks.

Source ID

An ID registered with sourceAddId() that identifies a distinct sequence of initialization segments & media segments appended to a specific source buffer.

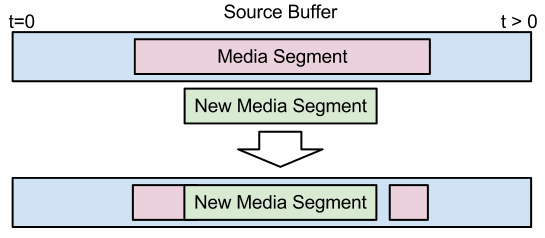

Source Buffer

A hypothetical buffer that contains the media segments for a particular source ID. When media segments are passed to sourceAppend() they update the state of this buffer. The source buffer only allows a single media segment to cover a specific point in the presentation timeline of each track. If a media segment gets appended that contains media data overlapping (in presentation time) with media data from an existing segment, then the new media data will override the old media data. Since media segments depend on initialization segments, the source buffer is also responsible for maintaining these associations. During playback, the media element pulls segment data out of the source buffers, demultiplexes it if necessary, and enqueues it into track buffers so it will get decoded and displayed. sourceBuffered() describes the time ranges that are covered by media segments in the source buffer.

Track Buffer

A hypothetical buffer that represents initialization and media data for a single AudioTrack or VideoTrack that has been queued for playback. This buffer may not exist in actual implementations, but it is intended to represent media data that will be decoded no matter what media segments are appended to update the source buffer. This distinction is important when considering appends that happen close to the current playback position. Details about transfers between the source buffer and track buffers are given here.

Initialization Segment

A sequence of bytes that contains all of the initialization information required to decode a sequence of media segments. This includes codec initialization data, trackID mappings for multiplexed segments, and timestamp offsets (e.g. edit lists).

Container specific examples of initialization segments:

Media Segment

A sequence of bytes that contains packetized & timestamped media data for a portion of the presentation timeline. Media segments are always associated with the most recently appended initialization segment.

Container specific examples of media segments:

Random Access Point

A position in a media segment where decoding and continuous playback can begin without relying on any previous data in the segment. For video this tends to be the location of I-frames. In the case of audio, most audio frames can be treated as a random access point. Since video tracks tend to have a more sparse distribution of random access points, the locations of these points are usually considered the random access points for multiplexed streams.

The subsections below outline the buffering model for this proposal. They describe how to add and remove source buffers from the presentation and the various rules and behaviors associated with appending data to an individual source buffer. At the highest level, the web application simply creates source buffers and appends a sequence of initialization segments and media segments to update the buffer's state. The media element pulls media data out of the source buffers, plays it, and fires events just like it would if a normal URL were passed to the src attribute. The web application is expected to monitor media element events to determine when it needs to append more media segments.

Creating a new source buffer is initiated by calling sourceAddId(). This method allows the web application to specify the source ID it wants to associate with a new source buffer and indicates the format of the data it intends to append. The user agent looks at the type information and determines whether it supports the desired format and has sufficient resources to handle a new source buffer for this device. If the user agent can't support another source ID then it will throw an appropriate exception to signal why the request couldn't be satisfied.
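The following is a minimal sketch of how a web application might guard a call to sourceAddId(), assuming the exception name is readable from the thrown object. The helper names tryAddSourceId and makeFakeMediaElement are hypothetical and not part of this proposal; the fake element only stands in for a user agent so that the sketch can run outside a browser.

```javascript
// Hypothetical helper: registers a source ID and reports failure as a value
// instead of letting the exception propagate.
function tryAddSourceId(mediaElement, id, type) {
  try {
    mediaElement.sourceAddId(id, type);
    return { ok: true, error: null };
  } catch (err) {
    // Assumption: the thrown object exposes the exception name on `name`.
    return { ok: false, error: (err && err.name) || String(err) };
  }
}

// Stand-in for a media element so the sketch runs outside a browser.
// It rejects unknown types and allows at most `maxIds` source IDs.
function makeFakeMediaElement(supportedTypes, maxIds) {
  const ids = new Set();
  function fail(name) {
    const err = new Error(name);
    err.name = name;
    throw err;
  }
  return {
    sourceAddId(id, type) {
      if (!supportedTypes.includes(type)) fail('NOT_SUPPORTED_ERR');
      if (ids.size >= maxIds) fail('QUOTA_EXCEEDED_ERR');
      ids.add(id);
    },
  };
}
```

An application could call tryAddSourceId() with a list of candidate types in order of preference and use the first one that succeeds.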

Updating the state of a source buffer requires appending at least one initialization segment and one or more media segments via sourceAppend(). The following list outlines some of the basic rules for appending segments.

- sourceAbort() is called.
- sourceAppend()).

To simplify the implementation and facilitate interoperability, a few constraints are placed on the initialization segments that are appended to a specific source ID:

To simplify the implementation and facilitate interoperability, a few constraints are placed on the media segments that are appended to a specific source ID:

- sourceBuffered().

Once a new source ID has been added, the source buffer expects an initialization segment to be appended first. This first segment indicates the number and type of streams contained in the media segments that follow. This allows the media element to configure the necessary decoders and output devices. This first segment can also cause a readyState transition to HAVE_METADATA if this is the first source ID registered, or if it is the first track of a specific type (i.e. first audio or first video track). If neither of the conditions holds, then the tracks for this new source ID will just appear as disabled tracks and won't affect the current readyState until they are selected. The media element will also add the appropriate tracks to the audioTracks & videoTracks collections and fire the necessary change events. The description for sourceAppend() contains all the details.

If a media segment is appended to a time range that is not covered by existing segments in the source buffer, then its data is copied directly into the source buffer. Addition of this data may trigger readyState transitions depending on what other data is buffered and whether the media element has determined if it can start playback. Calls to sourceBuffered() will always reflect the current TimeRanges buffered in the source buffer.

There are several ways that media segments can overlap segments in the source buffer. Behavior for the different overlap situations is described below. If more than one overlap applies, then the start overlap gets resolved first, followed by any complete overlaps, and finally the end overlap. If a segment contains multiple tracks then the overlap is resolved independently for each track.

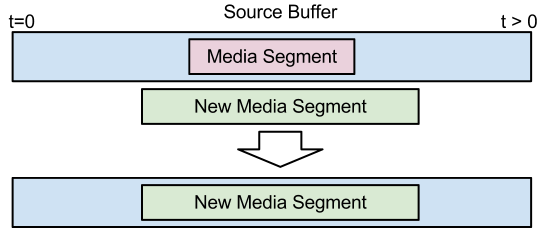

The figure above shows how the source buffer gets updated when a new media segment completely overlaps a segment in the buffer. In this case, the new segment completely replaces the old segment.

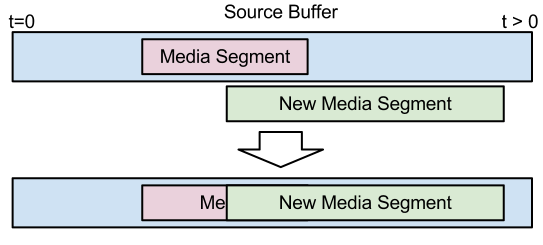

The figure above shows how the source buffer gets updated when the beginning of a new media segment overlaps a segment in the buffer. In this case the new segment replaces all the old media data in the overlapping region. Since media segments are constrained to starting with random access points, this provides a seamless transition between segments.

The one case that requires special attention is where an audio frame overlaps with the start of the new media segment. The base level behavior that MUST be supported requires dropping the old audio frame that overlaps the start of the new segment and inserting silence for the small gap that is created. A higher quality implementation could support outputting a portion of the old segment and all of the new segment, or crossfade during the overlapping region. This is a quality of implementation issue. The key property here, though, is that the small silence gap should not be reflected in the ranges reported by sourceBuffered().

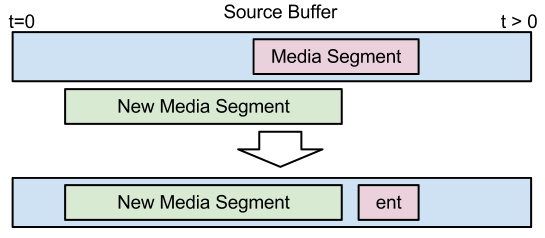

The figure above shows how the source buffer gets updated when the end of a new media segment overlaps a segment in the buffer. In this case, the media element tries to keep as much of the old segment as possible. The amount saved depends on where the closest random access point, in the old segment, is to the end of the new segment. In the case of audio, if the gap is smaller than the size of an audio frame, then the media element should insert silence for this gap and not reflect it in sourceBuffered().

An implementation may keep old segment data before the end of the new segment to avoid creating a gap if it wishes. Doing this, though, can significantly increase implementation complexity and could cause delays at the splice point. The key property that must be preserved is that the entirety of the new segment gets added to the source buffer; it is up to the implementation how much of the old segment data is retained. The web application can use sourceBuffered() to determine how much of the old segment was preserved.

The figure above shows how the source buffer gets updated when the new media segment is in the middle of the old segment. This condition is handled by first resolving the start overlap and then resolving the end overlap.
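The overlap rules described in this section can be sketched as a small model of a single-track source buffer. This is an illustration under stated assumptions, not the algorithm a user agent runs: the entry shape ({ start, end, raps, label }), the half-open [start, end) convention, and the function names appendSegment and bufferedRanges are all hypothetical, random access point lists are assumed to be sorted, and the audio silence-insertion behavior is not modeled.

```javascript
// Each entry covers [start, end); `raps` lists the random access point
// timestamps inside the entry, in ascending order.
function appendSegment(buffer, seg) {
  const result = [];
  for (const old of buffer) {
    // No overlap: keep the old entry untouched.
    if (old.end <= seg.start || old.start >= seg.end) {
      result.push(old);
      continue;
    }
    // Start overlap: keep the part of the old entry before the new segment.
    if (old.start < seg.start) {
      result.push({
        ...old,
        end: seg.start,
        raps: old.raps.filter((t) => t < seg.start),
      });
    }
    // End overlap: keep old data from the first random access point at or
    // after the end of the new segment, if there is one.
    if (old.end > seg.end) {
      const resume = old.raps.find((t) => t >= seg.end);
      if (resume !== undefined) {
        result.push({
          ...old,
          start: resume,
          raps: old.raps.filter((t) => t >= resume),
        });
      }
    }
    // Complete overlap: neither branch runs, so the old entry is dropped.
  }
  result.push(seg);
  return result.sort((a, b) => a.start - b.start);
}

// Time ranges covered by the model, comparable to what sourceBuffered() reports.
function bufferedRanges(buffer) {
  return buffer.map((entry) => [entry.start, entry.end]);
}
```

Appending a segment covering [3, 4) into an entry covering [0, 10) with random access points at 0 and 5 leaves the ranges [0, 3), [3, 4) and [5, 10) in this model. The gap between 4 and 5 reflects the old data that cannot be kept because no random access point precedes it.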

The source buffer represents the media that the web application would like the media element to play. The track buffer contains the data that will actually get decoded and rendered. In most cases the track buffer will simply contain a subset of the source buffer near the current playback position. These two buffers start to diverge, though, when media segments that overlap or are very close to the current playback position are appended. Depending on the contents of the new media segment, it may not be possible to switch to the new data immediately because there isn't a random access point close enough to the current playback position.

The quality of the implementation determines how much data is considered "in the track buffer". It should transfer data to the track buffer as late as possible while maintaining seamless playback. Some implementations may be able to instantiate multiple decoders or decode the new data significantly faster than real-time to achieve a seamless splice immediately. Other implementations may delay until the next random access point before switching to the newly appended data. Notice that this difference in behavior is only observable when appending close to the current playback position.

The track buffer represents a media subsegment, like a group of pictures or something with similar decode dependencies, that the media element commits to playing. This commitment may be influenced by a variety of things like limited decoding resources, hardware decode buffers, a jitter buffer, or the desire to limit implementation complexity.

Here is an example to help clarify the role of the track buffer. Say the current playback position has a timestamp of 8 and the media element pulled frames with timestamps 9 & 10 into the track buffer. The web application then appends a higher quality media segment that starts with a random access point at timestamp 9. The source buffer will get updated with the higher quality data, but the media element won't be able to switch to this higher quality data until the next random access point at timestamp 20. This is because a frame for timestamp 9 is already in the track buffer. As you can see, the track buffer represents the "point of no return" for decoding. If a seek occurs the media element may choose to use the higher quality data since a seek might imply flushing the track buffer and the user expects a break in playback.
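The example above reduces to a small calculation: the switch happens at the first random access point in the new data that lies after everything already committed to the track buffer. The sketch below assumes timestamps are plain numbers; the function name switchTimestamp is hypothetical and only illustrates the reasoning for an implementation that waits for the next random access point.

```javascript
// Returns the timestamp at which playback can switch to newly appended data:
// the first random access point in the new data that lies after the last
// timestamp already committed to the track buffer. Returns null if none exists.
function switchTimestamp(newRandomAccessPoints, lastCommittedTimestamp) {
  const candidates = newRandomAccessPoints.filter(
    (t) => t > lastCommittedTimestamp);
  return candidates.length > 0 ? Math.min(...candidates) : null;
}
```

With random access points at 9 and 20 and frames up to timestamp 10 already in the track buffer, switchTimestamp([9, 20], 10) evaluates to 20, matching the example.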

When a new media segment is appended, memory constraints may cause previously appended segments to get evicted from the source buffer. The eviction algorithm is implementation dependent, but segments that aren't likely to be needed soon are the most likely to get evicted. The sourceBuffered() method allows the web application to monitor what time ranges are currently buffered in the source buffer.
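As a sketch of how a web application might monitor the source buffer, the helper below measures how much media is buffered contiguously from a given time. It assumes the object returned by sourceBuffered() behaves like a TimeRanges (length, start(i), end(i)); bufferedAhead and makeTimeRanges are hypothetical names, and makeTimeRanges is only a stand-in so the sketch runs outside a browser.

```javascript
// Amount of media buffered contiguously from `time`, given a TimeRanges-like
// object. Returns 0 when `time` is not inside any buffered range.
function bufferedAhead(ranges, time) {
  for (let i = 0; i < ranges.length; i++) {
    if (ranges.start(i) <= time && time < ranges.end(i)) {
      return ranges.end(i) - time;
    }
  }
  return 0;
}

// Minimal TimeRanges stand-in built from [start, end] pairs.
function makeTimeRanges(pairs) {
  return {
    length: pairs.length,
    start: (i) => pairs[i][0],
    end: (i) => pairs[i][1],
  };
}
```

An application could compare bufferedAhead(video.sourceBuffered(id), video.currentTime) against a threshold of its own choosing to decide when to append more media segments or to re-append evicted ones.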

Removing a source ID with sourceRemoveId() releases all resources associated with the ID. This includes destroying the source buffer, track buffers, and decoders. The media element will also remove the appropriate tracks from audioTracks & videoTracks and fire the necessary change events. Playback may become degraded or stop if the currently selected VideoTrack or the only enabled AudioTracks are removed.

We extend HTML media elements to allow media data to be streamed into them from JavaScript.

    partial interface HTMLMediaElement {
      // URL passed to src attribute to enable the media source logic.
      readonly attribute DOMString mediaSourceURL;

      // Manages IDs for appending media to the source.
      void sourceAddId(DOMString id, DOMString type);
      void sourceRemoveId(DOMString id);

      // Returns the time ranges buffered for a specific ID.
      TimeRanges sourceBuffered(DOMString id);

      // Append segment data.
      void sourceAppend(DOMString id, Uint8Array data);

      // Abort the current segment.
      void sourceAbort(DOMString id);

      // end of stream status codes.
      const unsigned short EOS_NO_ERROR = 0;
      const unsigned short EOS_NETWORK_ERR = 1;
      const unsigned short EOS_DECODE_ERR = 2;
      void sourceEndOfStream(unsigned short status);

      // states
      const unsigned short SOURCE_CLOSED = 0;
      const unsigned short SOURCE_OPEN = 1;
      const unsigned short SOURCE_ENDED = 2;
      readonly attribute unsigned short sourceState;

      [TreatNonCallableAsNull] attribute Function? onsourceopen;
      [TreatNonCallableAsNull] attribute Function? onsourceended;
      [TreatNonCallableAsNull] attribute Function? onsourceclose;
    };

The mediaSourceURL attribute returns the URL used to enable the Media Source extension methods. To enable the Media Source extensions on a media element, assign this URL to the src attribute. The format of the URL is browser specific and may be unique for each HTMLMediaElement. The mediaSourceURL on one HTMLMediaElement should not be assigned to the src attribute on a different HTMLMediaElement.

Example mediaSourceURL:

x-media-source:e183f43d-c8a3-4905-bf89-e8e920041c7c

Note the browser specific scheme and use of a UUID for the path to make it unique.
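A minimal sketch of switching an element into "media source" mode follows. The feature check on mediaSourceURL is an assumption about how an application might detect support, and enableMediaSource is a hypothetical helper name, not part of this proposal.

```javascript
// Assigns the element's own mediaSourceURL to its src attribute.
// Returns false when the attribute is missing or empty, which this sketch
// treats as "the extension is not available on this element".
function enableMediaSource(mediaElement) {
  const url = mediaElement.mediaSourceURL;
  if (typeof url !== 'string' || url === '') {
    return false;
  }
  // The URL is only meaningful for the element it was read from.
  mediaElement.src = url;
  return true;
}
```

Because the helper reads the URL from the same element it assigns to, it avoids assigning one element's mediaSourceURL to a different element.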

mediaSourceURL is one approach to switching the media element into "media source" mode. Alternative approaches should be explored to improve consistency with other APIs and provide a declarative mechanism for enabling "media source" mode.

The sourceAddId(id, type) method must run the following steps:

- INVALID_ACCESS_ERR exception and abort these steps.
- INVALID_STATE_ERR exception and abort these steps.
- INVALID_ACCESS_ERR exception and abort these steps.
- NOT_SUPPORTED_ERR exception and abort these steps.
- QUOTA_EXCEEDED_ERR exception and abort these steps.
- sourceState attribute is not in the SOURCE_OPEN state then throw an INVALID_STATE_ERR exception and abort these steps.

The sourceRemoveId(id) method must run the following steps:

- INVALID_ACCESS_ERR exception and abort these steps.
- sourceState attribute is in the SOURCE_CLOSED state then throw an INVALID_STATE_ERR exception and abort these steps.
- audioTracks and videoTracks for all tracks associated with id and fire a simple event named change on the modified lists.

The sourceBuffered(id) method must run the following steps:

- INVALID_ACCESS_ERR exception and abort these steps.
- sourceAddId() then throw a SYNTAX_ERR exception and abort these steps.
- sourceState attribute is in the SOURCE_CLOSED state then throw an INVALID_STATE_ERR exception and abort these steps.
- TimeRanges for the source buffer associated with id.

The sourceAppend(id, data) method must run the following steps:

- INVALID_ACCESS_ERR exception and abort these steps.
- sourceAddId() then throw a SYNTAX_ERR exception and abort these steps.
- INVALID_ACCESS_ERR exception and abort these steps.
- sourceState attribute is not in the SOURCE_OPEN state then throw an INVALID_STATE_ERR exception and abort these steps.
- readyState attribute is HAVE_NOTHING:
  - readyState attribute to HAVE_METADATA and fire the appropriate event for this transition.
- readyState attribute is greater than HAVE_CURRENT_DATA and the initialization segment contains the first video or first audio track in the presentation:
  - readyState attribute to HAVE_METADATA and fire the appropriate event for this transition.
- audioTracks
  - AudioTrack and mark it as enabled.
  - AudioTrack for each audio track in the initialization segment.
- videoTracks:
  - VideoTrack and mark it as selected.
  - VideoTrack for each video track in the initialization segment.
- readyState attribute is HAVE_METADATA and data causes all active IDs to have media data for the current playback position.
  - readyState attribute to HAVE_CURRENT_DATA and fire the appropriate event for this transition.
- readyState attribute is HAVE_CURRENT_DATA and data causes all active IDs to have media data beyond the current playback position.
  - readyState attribute to HAVE_FUTURE_DATA and fire the appropriate event for this transition.
- readyState attribute is HAVE_FUTURE_DATA and data causes all active IDs to have enough data to start playback.
  - readyState attribute to HAVE_ENOUGH_DATA and fire the appropriate event for this transition.

The sourceAbort(id) method must run the following steps:

- INVALID_ACCESS_ERR exception and abort these steps.
- sourceAddId() then throw a SYNTAX_ERR exception and abort these steps.
- sourceState attribute is not in the SOURCE_OPEN state then throw an INVALID_STATE_ERR exception and abort these steps.
- sourceBuffered() will reflect what data, if any, was kept.

EOS_NO_ERROR (numeric value 0)

EOS_NETWORK_ERR (numeric value 1)

- error attribute to be set to MediaError.MEDIA_ERR_NETWORK

EOS_DECODE_ERR (numeric value 2)

- error attribute to be set to MediaError.MEDIA_ERR_DECODE

The sourceEndOfStream(status) method must run the following steps:

- sourceState attribute is not in the SOURCE_OPEN state then throw an INVALID_STATE_ERR exception and abort these steps.
- sourceState attribute value to SOURCE_ENDED.
- EOS_NO_ERROR
  - sourceAppend() has been played.
- EOS_NETWORK_ERR
- EOS_DECODE_ERR
- INVALID_ACCESS_ERR exception.

The sourceState attribute represents the state of the source. It can have the following values:

- SOURCE_CLOSED (numeric value 0)
- SOURCE_OPEN (numeric value 1)
- SOURCE_ENDED (numeric value 2)
  - sourceEndOfStream() has been called on this source.

When the media element is created, sourceState must be set to SOURCE_CLOSED (0).

| Event handler | Event handler event type |
|---|---|
| onsourceopen | sourceopen |
| onsourceended | sourceended |
| onsourceclose | sourceclose |

| Event name | Interface | Dispatched when... |
|---|---|---|
| sourceopen | Event | When the source transitions from SOURCE_CLOSED to SOURCE_OPEN or from SOURCE_ENDED to SOURCE_OPEN. |
| sourceended | Event | When the source transitions from SOURCE_OPEN to SOURCE_ENDED. |
| sourceclose | Event | When the source transitions from SOURCE_OPEN or SOURCE_ENDED to SOURCE_CLOSED. |
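The transitions in the event summary above can be captured in a small lookup table. This is a sketch for illustration only: the constants mirror the values defined for sourceState, while TRANSITION_EVENTS and eventForTransition are hypothetical names that do not appear in this proposal.

```javascript
const SOURCE_CLOSED = 0;
const SOURCE_OPEN = 1;
const SOURCE_ENDED = 2;

// Event fired for each transition listed in the event summary, keyed "from>to".
const TRANSITION_EVENTS = {
  [SOURCE_CLOSED + '>' + SOURCE_OPEN]: 'sourceopen',
  [SOURCE_ENDED + '>' + SOURCE_OPEN]: 'sourceopen',
  [SOURCE_OPEN + '>' + SOURCE_ENDED]: 'sourceended',
  [SOURCE_OPEN + '>' + SOURCE_CLOSED]: 'sourceclose',
  [SOURCE_ENDED + '>' + SOURCE_CLOSED]: 'sourceclose',
};

// Returns the event name for a transition, or null when the summary does not
// list that transition.
function eventForTransition(from, to) {
  const name = TRANSITION_EVENTS[from + '>' + to];
  return name === undefined ? null : name;
}
```

A transition such as SOURCE_CLOSED to SOURCE_ENDED is not listed in the summary, so the lookup returns null for it.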

src attribute on a media element or the src attribute on a <source> element associated with a media element to mediaSourceURL

When the media element attempts the resource fetch algorithm with the URL from mediaSourceURL it will take one of the following actions:

- sourceState is NOT set to SOURCE_CLOSED
  - MEDIA_ERR_SRC_NOT_SUPPORTED error.
- sourceState attribute to SOURCE_OPEN
- sourceopen.
- sourceAppend()

The following steps are run in any case where the media element is going to transition to NETWORK_EMPTY and fire an emptied event. These steps should be run right before the transition.

- sourceState attribute to SOURCE_CLOSED
- sourceclose.

- seeking algorithm starts and has reached the stage where it is about to fire the seeking event.
- sourceState attribute is set to SOURCE_ENDED, then the media element sets the sourceState attribute to SOURCE_OPEN and fires a simple event named sourceopen
- seeking algorithm fires the seeking event
- readyState attribute to HAVE_METADATA and fire the appropriate event for this transition.
- sourceAppend(). The web application can use sourceBuffered() to determine what the media element needs to resume playback.
- seeking algorithm and fires the seeked event indicating that the seek has completed.

The following steps are periodically run during playback to make sure that all of the source buffers for the active IDs have enough data to ensure uninterrupted playback. Appending new segments and changing the set of active IDs also cause these steps to run because they affect the conditions that trigger state transitions. The web application can monitor changes in readyState to drive media segment appending.

- sourceBuffered() for all active IDs does not contain TimeRanges for the current playback position:
  - readyState attribute to HAVE_METADATA and fire the appropriate event for this transition.
- sourceBuffered() for all active IDs contain TimeRanges that include the current playback position and enough data to ensure uninterrupted playback:
  - readyState attribute to HAVE_ENOUGH_DATA and fire the appropriate event for this transition.
  - HAVE_CURRENT_DATA.
- sourceBuffered() for at least one active ID contains a TimeRange that includes the current playback position but not enough data to ensure uninterrupted playback:
  - readyState attribute to HAVE_FUTURE_DATA and fire the appropriate event for this transition.
  - HAVE_CURRENT_DATA.
- sourceBuffered() for at least one active ID contains a TimeRange that ends at the current playback position and does not have a range covering the time immediately after the current position:
  - readyState attribute to HAVE_CURRENT_DATA and fire the appropriate event for this transition.

The bytes provided through sourceAppend() for a source ID form a logical byte stream. The format of this byte stream depends on the media container format in use and is defined in a byte stream format specification. Byte stream format specifications based on WebM and the ISO Base Media File Format are provided below. If these formats are supported then the byte stream formats described below MUST be supported.

This section provides general requirements for all byte stream formats:

Byte stream specifications must at a minimum define constraints which ensure that the above requirements hold. Additional constraints may be defined, for example to simplify implementation.

Initialization segments are an optimization. They allow a byte stream format to avoid duplication of information in Media Segments that is the same for many Media Segments. Byte stream format specifications need not specify Initialization Segment formats, however. They may instead require that such information is duplicated in every Media Segment.

This section defines segment formats for implementations that choose to support WebM.

A WebM initialization segment must contain a subset of the elements at the start of a typical WebM file.

The following rules apply to WebM initialization segments:

A WebM media segment is a single Cluster element.

The following rules apply to WebM media segments:

The timestamp in the first block of the first media segment appended establishes the starting timestamp for the presentation timeline. All media segments appended after this first segment are expected to have timestamps greater than or equal to this timestamp.

If for some reason a web application doesn't want to append data at the beginning of the timeline, it can establish the starting timestamp by appending a Cluster element that only contains a Timecode element with the presentation start time. This must be done before any other media segments are appended.

A SimpleBlock element with its Keyframe flag set signals the location of a random access point for that track. Media segments containing multiple tracks are only considered a random access point if the first SimpleBlock for each track has its Keyframe flag set. The order of the multiplexed blocks should conform to the WebM Muxer Guidelines.
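The multi-track rule above can be sketched as a check over the blocks of one Cluster. The input shape is an assumption: the sketch takes an already-parsed, ordered list of { track, keyframe } descriptions rather than raw WebM bytes, and the function name clusterStartsWithRandomAccessPoint is hypothetical.

```javascript
// `blocks` lists the SimpleBlocks of one Cluster in order, each described as
// { track: number, keyframe: boolean }.
// Returns true when the first block seen for every track is a keyframe.
function clusterStartsWithRandomAccessPoint(blocks) {
  const seenTracks = new Set();
  for (const block of blocks) {
    if (seenTracks.has(block.track)) {
      continue; // Only the first block of each track matters.
    }
    seenTracks.add(block.track);
    if (!block.keyframe) {
      return false;
    }
  }
  // A Cluster without any blocks carries no random access point.
  return seenTracks.size > 0;
}
```

Later non-keyframe blocks on a track do not affect the result, since only the first SimpleBlock for each track is examined.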

This section defines segment formats for implementations that choose to support the ISO Base Media File Format ISO/IEC 14496-12 (ISO BMFF).

An ISO BMFF initialization segment shall contain a single Movie Box (moov). The tracks in the Movie Box shall not contain any samples (i.e. the entry_count in the stts, stsc and stco boxes shall be set to zero). A Movie Extends (mvex) box shall be contained in the Movie Box to indicate that Movie Fragments are to be expected.

The initialization segment may contain Edit Boxes (edts) which provide a mapping of composition times for each track to the global presentation time.

An ISO BMFF media segment shall contain a single Movie Fragment Box (moof) followed by one or more Media Data Boxes (mdat).
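The box layout required above can be checked with a short top-level box scan. This is a sketch rather than a validator: it relies only on the ISO BMFF box header (a 32-bit big-endian size followed by a four-character type, with size 1 signalling a 64-bit size and size 0 meaning "to the end of the data"), it does not look inside boxes, and it does not verify that the last box is complete. The function names listTopLevelBoxes and looksLikeMediaSegment are hypothetical.

```javascript
// Lists the types of the top-level boxes found in `bytes` (a Uint8Array).
function listTopLevelBoxes(bytes) {
  const view = new DataView(bytes.buffer, bytes.byteOffset, bytes.byteLength);
  const types = [];
  let offset = 0;
  while (offset + 8 <= bytes.byteLength) {
    let size = view.getUint32(offset); // Big-endian by default.
    const type = String.fromCharCode(
      bytes[offset + 4], bytes[offset + 5], bytes[offset + 6], bytes[offset + 7]);
    if (size === 1) {
      // A 64-bit size follows the type field.
      if (offset + 16 > bytes.byteLength) break;
      size = Number(view.getBigUint64(offset + 8));
    } else if (size === 0) {
      // The box extends to the end of the data.
      size = bytes.byteLength - offset;
    }
    if (size < 8) break; // Malformed header; stop rather than loop forever.
    types.push(type);
    offset += size;
  }
  return types;
}

// True when the data is exactly one moof box followed by one or more mdat boxes.
function looksLikeMediaSegment(bytes) {
  const types = listTopLevelBoxes(bytes);
  return types.length >= 2 &&
    types[0] === 'moof' &&
    types.slice(1).every((t) => t === 'mdat');
}
```

A web application could run such a check on data it has fetched before passing it to sourceAppend(), to catch obviously mis-segmented input early.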

The following rules shall apply to ISO BMFF media segments:

The Track Fragment Decode Time Box is defined in ISO/IEC 14496-12 Amendment 3.

The earliest presentation timestamp of any sample of the first media segment appended establishes the starting timestamp for the presentation timeline. All media segments appended after this first segment are expected to have presentation timestamps greater than or equal to this timestamp.

If for some reason a web application doesn't want to append data at the beginning of the timeline, it can establish the starting timestamp by appending a Movie Fragment Box containing a Track Fragment Box containing a Track Fragment Decode Time Box. The start time of the presentation is then the presentation time of a hypothetical sample with zero composition offset. This must be done before any other media segments are appended.

A random access point as defined in this specification corresponds to a Stream Access Point of type 1 or 2 as defined in Annex I of ISO/IEC 14496-12 Amendment 3.

Example use of the Media Source Extensions

    <video id="v" autoplay> </video>

    <script>
      // GetStreamHeaders(), GetNextCluster(), SeekToClusterAt() and
      // HaveMoreClusters() are application-defined and not shown here.
      var sourceID = "123";

      function onOpen(e) {
        var video = e.target;
        var headers = GetStreamHeaders();

        if (headers == null) {
          // Error fetching headers. Signal end of stream with an error and
          // stop, since nothing can be appended without the headers.
          video.sourceEndOfStream(HTMLMediaElement.EOS_NETWORK_ERR);
          return;
        }

        video.sourceAddId(sourceID, 'video/webm; codecs="vorbis,vp8"');

        // Append the stream headers (i.e. WebM Header, Info, and Tracks elements)
        video.sourceAppend(sourceID, headers);

        // Append some initial media data.
        video.sourceAppend(sourceID, GetNextCluster());
      }

      function onSeeking(e) {
        var video = e.target;

        // Abort current segment append.
        video.sourceAbort(sourceID);

        // Notify the cluster loading code to start fetching data at the
        // new playback position.
        SeekToClusterAt(video.currentTime);

        // Append clusters from the new playback position.
        video.sourceAppend(sourceID, GetNextCluster());
        video.sourceAppend(sourceID, GetNextCluster());
      }

      function onProgress(e) {
        var video = e.target;

        if (video.sourceState == video.SOURCE_ENDED)
          return;

        // If we have run out of stream data, then signal end of stream.
        if (!HaveMoreClusters()) {
          video.sourceEndOfStream(HTMLMediaElement.EOS_NO_ERROR);
          return;
        }

        video.sourceAppend(sourceID, GetNextCluster());
      }

      // The <video> element is declared before this script so that
      // getElementById() can find it.
      var video = document.getElementById('v');
      video.addEventListener('sourceopen', onOpen);
      video.addEventListener('seeking', onSeeking);
      video.addEventListener('progress', onProgress);

      // Switch the element into "media source" mode.
      video.src = video.mediaSourceURL;
    </script>

This section contains issues that have come up during the spec update process, but haven't been resolved yet. The issues and potential solutions are listed below. The potential solutions are just initial thoughts and have not been subjected to rigorous discussion yet.

Concerns were raised about limiting the amount of data that JavaScript can append on memory constrained devices. We have briefly discussed making sourceAppend() asynchronous and allowing an "append done" event to fire when the user agent is ready to accept more data.

Since user agents may have different limitations on how they handle media segment overlaps, the web application needs a mechanism to detect the user agent's capabilities. We have briefly discussed adding a parameter, similar to 'codec', to the MIME type passed to sourceAddId(). We have not discussed any specific details beyond that.

There have been discussions about changing sourceAppend() to take a URL and optional start & end offset parameters instead of a Uint8Array. The current byte stream specs don't really require access to the raw bytes and if JavaScript wants to construct its own stream from bytes it could use a BlobBuilder and a Blob URL. Going down this path removes the need for a streaming XHR API and could improve interactions with the browser cache. Download progress could be reported through an "append progress" event so that bitrate calculations could be made by JavaScript.

There have been some discussions about adding a method that applies an offset to the timestamps in media segments. Ad insertion and mashups where the content being appended does not have timestamps that match the desired location in the presentation timeline are the primary motivators for this feature. A method along the lines of sourceTimestampMapping(presentationTimestamp, segmentTimestamp) could specify the timestamp mapping to use for future media segments that get appended. The mapping would be applied at append time before the data goes into the source buffer. Adding a feature like this would prevent the web application from having to rewrite timestamps in the media segments. The exact semantics and details of this feature still need to be worked out.
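To make the idea concrete, the arithmetic behind such a mapping could look like the sketch below. It is purely illustrative: no such method exists in this proposal, the semantics are still undecided, and makeTimestampMapping is a hypothetical name chosen to echo the method suggested above.

```javascript
// Hypothetical: returns a function that shifts segment timestamps so that
// `segmentTimestamp` lands at `presentationTimestamp` in the presentation
// timeline. All other timestamps are shifted by the same offset.
function makeTimestampMapping(presentationTimestamp, segmentTimestamp) {
  const offset = presentationTimestamp - segmentTimestamp;
  return (timestamp) => timestamp + offset;
}
```

For an ad whose media segments start at timestamp 0 and that should play at position 30 in the presentation, makeTimestampMapping(30, 0) maps a segment timestamp of 5 to 35.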

The current text focuses on behavior for audio and video. Behavior for timed text still needs to be defined.

This proposal may overlap with portions of the Audio WG draft. Further investigation is needed around how these two proposals will work together.

Define how language and kind can be set on AudioTrack & VideoTrack objects. This information may be inside the manifest instead of initialization segments so we need a way for JavaScript to set this.

Specify a way to identify which source ID an AudioTrack or VideoTrack is associated with.

| Version | Comment |
|---|---|
| 0.5 | Minor updates before proposing to W3C HTML-WG. |
| 0.4 | Major revision. Adding source IDs, defining buffer model, and clarifying byte stream formats. |
| 0.3 | Minor text updates. |
| 0.2 | Updates to reflect initial WebKit implementation. |
| 0.1 | Initial Proposal |