Nested Resampling

In order to obtain honest performance estimates for a learner, all parts of the model building, such as preprocessing and model selection steps, should be included in the resampling, i.e., repeated for every pair of training/test data. For steps that themselves require resampling, like parameter tuning or feature selection (via the wrapper approach), this results in two nested resampling loops.

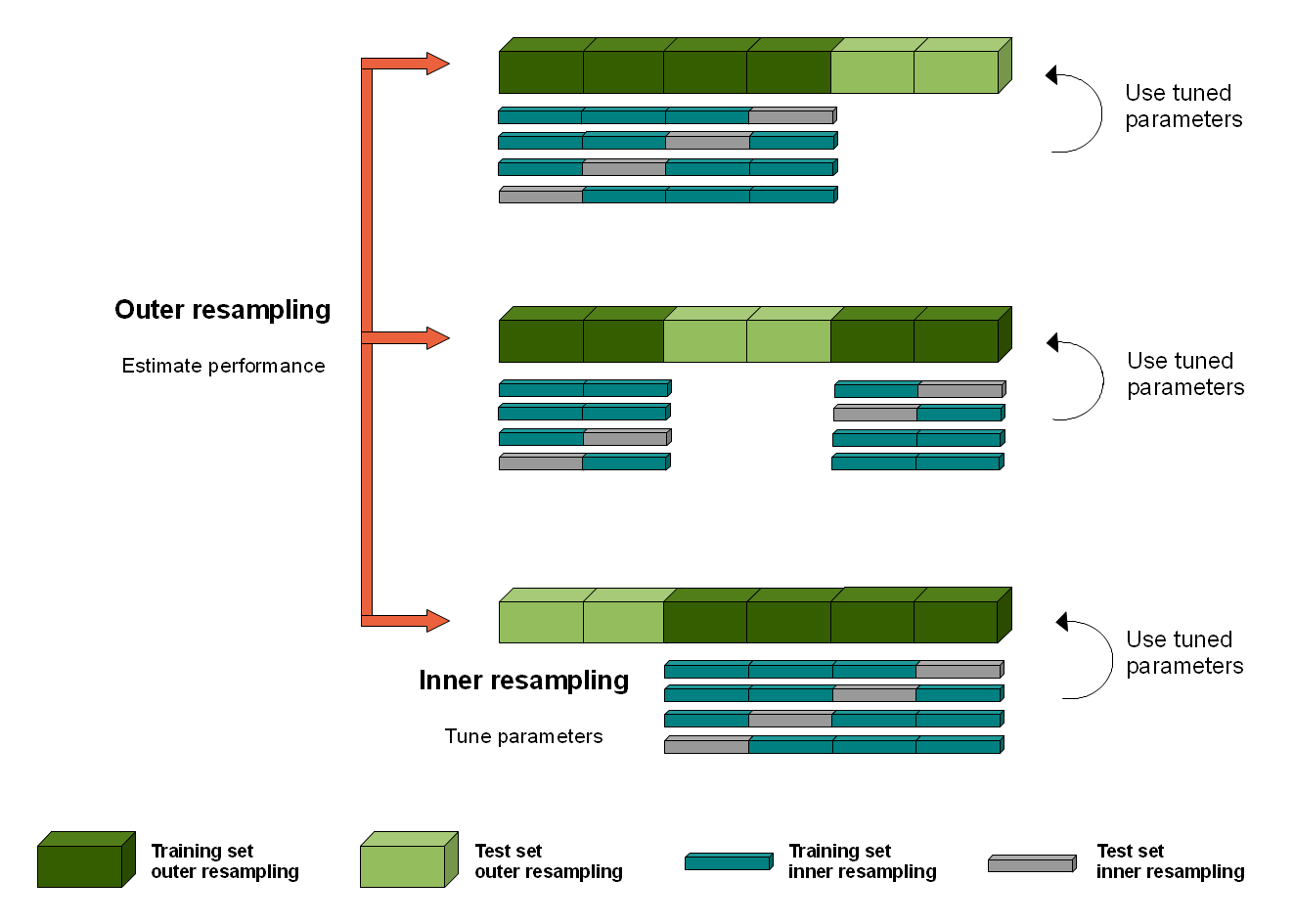

The graphic above illustrates nested resampling for parameter tuning with 3-fold cross-validation in the outer and 4-fold cross-validation in the inner loop.

In the outer resampling loop, we have three pairs of training/test sets. On each of these outer training sets, parameter tuning is done, thereby executing the inner resampling loop. This way, we get one set of selected hyperparameters for each outer training set. Then the learner is fitted on each outer training set using the corresponding selected hyperparameters, and its performance is evaluated on the corresponding outer test set.
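To make the two loops concrete, here is a minimal sketch in plain base R rather than mlr, so it is not how mlr implements its wrappers. It assumes the built-in cars data and, as a stand-in for hyperparameter tuning, selects the degree of a polynomial regression (1 to 3) by 4-fold inner cross-validation on each of 3 outer training sets, mirroring the graphic. The helper names make_folds, mse and tune_degree are hypothetical.

```r
# Base R sketch of nested resampling (illustration only, not mlr code).
set.seed(1)
data(cars)
degrees <- 1:3  # hypothetical "hyperparameter" grid: polynomial degree

# Randomly assign n rows to k folds of (roughly) equal size
make_folds <- function(n, k) sample(rep(seq_len(k), length.out = n))

# Mean squared error of a fitted model on new data
mse <- function(fit, newdata) mean((newdata$dist - predict(fit, newdata))^2)

# Inner loop: k-fold CV error of each candidate degree, using 'train' only
tune_degree <- function(train, k = 4) {
  folds <- make_folds(nrow(train), k)  # one inner split shared by all degrees
  cv_err <- sapply(degrees, function(d) {
    mean(sapply(seq_len(k), function(i) {
      fit <- lm(dist ~ poly(speed, d), data = train[folds != i, ])
      mse(fit, train[folds == i, ])
    }))
  })
  degrees[which.min(cv_err)]
}

# Outer loop: 3-fold CV around the complete "tune, then fit" procedure
outer_folds <- make_folds(nrow(cars), 3)
results <- t(sapply(1:3, function(i) {
  train <- cars[outer_folds != i, ]
  test  <- cars[outer_folds == i, ]
  d <- tune_degree(train)                         # selected on outer training set only
  fit <- lm(dist ~ poly(speed, d), data = train)  # refit with the selected degree
  c(iter = i, degree = d, mse = mse(fit, test))   # evaluate on the outer test set
}))
results
mean(results[, "mse"])  # nested-CV performance estimate
```

Each outer iteration may select a different degree, just as each outer training set in mlr yields its own set of tuned hyperparameters.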

In mlr, you can get nested resampling for free without programming any looping by using the wrapper functionality. This works as follows:

- Generate a wrapped Learner via function makeTuneWrapper or makeFeatSelWrapper. Specify the inner resampling strategy using their resampling argument.

- Call function resample (see also the section about resampling) and pass the outer resampling strategy to its resampling argument.

You can freely combine different inner and outer resampling strategies.

The outer strategy can be a resample description (ResampleDesc) or a resample instance (ResampleInstance). A common setup is prediction and performance evaluation on a fixed outer test set. This can be achieved by using function makeFixedHoldoutInstance to generate the outer ResampleInstance.

The inner resampling strategy should preferably be a ResampleDesc, as the sizes of the outer training sets might differ.

By default, the inner resample description is instantiated once for every outer training set. This way, during tuning/feature selection all parameter or feature sets are compared on the same inner training/test sets to reduce variance. You can also turn this off using the same.resampling.instance argument of makeTuneControl* or makeFeatSelControl*.
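The variance argument can be illustrated with a small base R experiment; this is a sketch, not mlr code. Using the built-in cars data, it repeatedly compares two candidate settings (polynomial degree 1 vs. 2) by 4-fold CV, once with a single fold assignment shared by both settings (the analogue of one inner instance per outer training set) and once with fresh splits for each setting. The helper names cv_err and new_folds are hypothetical. Typically the spread of the estimated difference is smaller with shared splits, because both settings see the same favorable or unfavorable partitions.

```r
# Shared vs. fresh inner splits when comparing two settings (illustration only).
set.seed(123)
data(cars)

# k-fold CV error of a degree-d polynomial for a given fold assignment
cv_err <- function(d, folds) {
  mean(sapply(unique(folds), function(i) {
    fit <- lm(dist ~ poly(speed, d), data = cars[folds != i, ])
    test <- cars[folds == i, ]
    mean((test$dist - predict(fit, test))^2)
  }))
}
new_folds <- function() sample(rep(1:4, length.out = nrow(cars)))

# Estimated difference "degree 2 minus degree 1", repeated 100 times
diff_shared <- replicate(100, {
  f <- new_folds()                # one instance, reused for both settings
  cv_err(2, f) - cv_err(1, f)
})
diff_fresh <- replicate(100, {
  cv_err(2, new_folds()) - cv_err(1, new_folds())  # each setting gets its own splits
})
c(sd_shared = sd(diff_shared), sd_fresh = sd(diff_fresh))
```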

Nested resampling is computationally expensive. For this reason, in the examples shown below we use relatively small search spaces and a low number of resampling iterations. In practice, you normally have to increase both. As this is computationally intensive, you might want to have a look at the section about parallelization.

Tuning

As you might recall from the tutorial page about tuning, you need to define a search space by function makeParamSet, a search strategy by makeTuneControl*, and a method to evaluate hyperparameter settings (i.e., the inner resampling strategy and a performance measure).

Below is a classification example. We evaluate the performance of a support vector machine (ksvm) with tuned cost parameter C and RBF kernel parameter sigma. We use 3-fold cross-validation in the outer and subsampling with 2 iterations in the inner loop. For tuning, a grid search is used to find the hyperparameters with the lowest error rate (mmce is the default measure for classification). The wrapped Learner is generated by calling makeTuneWrapper.

Note that in practice the parameter set should be larger. A common recommendation is 2^(-12:12) for both C and sigma.
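To get a feeling for the cost, you can count model fits. The sketch below assumes the setup of the following example (subsampling with 2 iterations inside, 3-fold CV outside) and that the tuned learner is refitted once per outer training set with the selected hyperparameters; prediction time is ignored. The names small, large and n_fits are illustrative only.

```r
# Small grid used in the example below: 5 values each for C and sigma
small <- expand.grid(C = 2^(-2:2), sigma = 2^(-2:2))
# Larger grid recommended above: 25 values each for C and sigma
large <- expand.grid(C = 2^(-12:12), sigma = 2^(-12:12))

inner_iters <- 2  # makeResampleDesc("Subsample", iters = 2)
outer_iters <- 3  # makeResampleDesc("CV", iters = 3)

# Per outer iteration: every configuration on every inner split, plus one refit
n_fits <- function(grid) outer_iters * (nrow(grid) * inner_iters + 1)
n_fits(small)  # 3 * (25 * 2 + 1)  = 153
n_fits(large)  # 3 * (625 * 2 + 1) = 3753
```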

library(mlr)

## Tuning in inner resampling loop

ps = makeParamSet(

makeDiscreteParam("C", values = 2^(-2:2)),

makeDiscreteParam("sigma", values = 2^(-2:2))

)

ctrl = makeTuneControlGrid()

inner = makeResampleDesc("Subsample", iters = 2)

lrn = makeTuneWrapper("classif.ksvm", resampling = inner, par.set = ps, control = ctrl, show.info = FALSE)

## Outer resampling loop

outer = makeResampleDesc("CV", iters = 3)

r = resample(lrn, iris.task, resampling = outer, extract = getTuneResult, show.info = FALSE)

r

#> Resample Result

#> Task: iris-example

#> Learner: classif.ksvm.tuned

#> Aggr perf: mmce.test.mean=0.0533333

#> Runtime: 3.93526

You can obtain the error rates on the 3 outer test sets by:

r$measures.test

#> iter mmce

#> 1 1 0.02

#> 2 2 0.06

#> 3 3 0.08
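The aggregated performance printed for r is, as the suffix test.mean indicates, the mean of these outer test errors. A quick check in base R with the numbers shown above:

```r
# Outer test errors as printed by r$measures.test above
mmce_outer <- c(0.02, 0.06, 0.08)
mean(mmce_outer)  # 0.16 / 3, matching "mmce.test.mean=0.0533333"
```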

Accessing the tuning result

We have kept the results of the tuning for further evaluations. For example, one might want to find out if the best obtained configurations vary for the different outer splits. As storing entire models may be expensive (but possible by setting models = TRUE), we used the extract option of resample. Function getTuneResult returns, among other things, the optimal hyperparameter values and the optimization path for each iteration of the outer resampling loop. Note that the performance values shown when printing r$extract are the aggregated performances resulting from inner resampling on the outer training set for the best hyperparameter configurations (not to be confused with r$measures.test shown above).

r$extract

#> [[1]]

#> Tune result:

#> Op. pars: C=2; sigma=0.25

#> mmce.test.mean=0.0147059

#>

#> [[2]]

#> Tune result:

#> Op. pars: C=4; sigma=0.25

#> mmce.test.mean=0.0000000

#>

#> [[3]]

#> Tune result:

#> Op. pars: C=4; sigma=0.25

#> mmce.test.mean=0.0735294

names(r$extract[[1]])

#> [1] "learner" "control" "x" "y" "threshold" "opt.path"

We can compare the optimal parameter settings obtained in the 3 resampling iterations. As you can see, the optimal configuration usually depends on the data. You may be able to identify a range of parameter settings that achieve good performance though, e.g., the values for C should be at least 1 and the values for sigma should be between 0 and 1.

With function getNestedTuneResultsOptPathDf you can extract the optimization paths for the 3 outer cross-validation iterations for further inspection and analysis. These are stacked in one data.frame with column iter indicating the resampling iteration.

opt.paths = getNestedTuneResultsOptPathDf(r)

head(opt.paths, 10)

#> C sigma mmce.test.mean dob eol error.message exec.time iter

#> 1 0.25 0.25 0.05882353 1 NA <NA> 0.044 1

#> 2 0.5 0.25 0.04411765 2 NA <NA> 0.050 1

#> 3 1 0.25 0.04411765 3 NA <NA> 0.052 1

#> 4 2 0.25 0.01470588 4 NA <NA> 0.430 1

#> 5 4 0.25 0.05882353 5 NA <NA> 0.037 1

#> 6 0.25 0.5 0.05882353 6 NA <NA> 0.040 1

#> 7 0.5 0.5 0.01470588 7 NA <NA> 0.039 1

#> 8 1 0.5 0.02941176 8 NA <NA> 0.038 1

#> 9 2 0.5 0.01470588 9 NA <NA> 0.039 1

#> 10 4 0.5 0.05882353 10 NA <NA> 0.038 1

Below we visualize the opt.paths for the 3 outer resampling iterations.

library(ggplot2)

g = ggplot(opt.paths, aes(x = C, y = sigma, fill = mmce.test.mean))

g + geom_tile() + facet_wrap(~ iter)

Another useful function is getNestedTuneResultsX, which extracts the best found hyperparameter settings for each outer resampling iteration.

getNestedTuneResultsX(r)

#> C sigma

#> 1 2 0.25

#> 2 4 0.25

#> 3 4 0.25

Feature selection

As you might recall from the section about feature selection, mlr supports the filter and the wrapper approach.

Wrapper methods

Wrapper methods use the performance of a learning algorithm to assess the usefulness of a feature set. In order to select a feature subset, a learner is trained repeatedly on different feature subsets, and the subset which leads to the best learner performance is chosen.

For feature selection in the inner resampling loop, you need to choose a search strategy (function makeFeatSelControl*), a performance measure and the inner resampling strategy. Then use function makeFeatSelWrapper to bind everything together.

Below we use sequential forward selection with linear regression on the BostonHousing data set (bh.task).

## Feature selection in inner resampling loop

inner = makeResampleDesc("CV", iters = 3)

lrn = makeFeatSelWrapper("regr.lm", resampling = inner,

control = makeFeatSelControlSequential(method = "sfs"), show.info = FALSE)

## Outer resampling loop

outer = makeResampleDesc("Subsample", iters = 2)

r = resample(learner = lrn, task = bh.task, resampling = outer, extract = getFeatSelResult,

show.info = FALSE)

r

#> Resample Result

#> Task: BostonHousing-example

#> Learner: regr.lm.featsel

#> Aggr perf: mse.test.mean=31.6991293

#> Runtime: 9.86819

r$measures.test

#> iter mse

#> 1 1 35.08611

#> 2 2 28.31215

Accessing the selected features

The result of the feature selection can be extracted by function getFeatSelResult.

It is also possible to keep whole models by setting models = TRUE when calling resample.

r$extract

#> [[1]]

#> FeatSel result:

#> Features (10): crim, zn, indus, nox, rm, dis, rad, tax, ptratio, lstat

#> mse.test.mean=20.1593896

#>

#> [[2]]

#> FeatSel result:

#> Features (9): zn, nox, rm, dis, rad, tax, ptratio, b, lstat

#> mse.test.mean=22.5966796

## Selected features in the first outer resampling iteration

r$extract[[1]]$x

#> [1] "crim" "zn" "indus" "nox" "rm" "dis" "rad"

#> [8] "tax" "ptratio" "lstat"

## Resampled performance of the selected feature subset on the first outer training set

r$extract[[1]]$y

#> mse.test.mean

#> 20.15939

As for tuning, you can extract the optimization paths. The resulting data.frames contain, among others, binary columns for all features, indicating if they were included in the linear regression model, and the corresponding performances.

opt.paths = lapply(r$extract, function(x) as.data.frame(x$opt.path))

head(opt.paths[[1]])

#> crim zn indus chas nox rm age dis rad tax ptratio b lstat mse.test.mean

#> 1 0 0 0 0 0 0 0 0 0 0 0 0 0 80.33019

#> 2 1 0 0 0 0 0 0 0 0 0 0 0 0 65.95316

#> 3 0 1 0 0 0 0 0 0 0 0 0 0 0 69.15417

#> 4 0 0 1 0 0 0 0 0 0 0 0 0 0 55.75473

#> 5 0 0 0 1 0 0 0 0 0 0 0 0 0 80.48765

#> 6 0 0 0 0 1 0 0 0 0 0 0 0 0 63.06724

#> dob eol error.message exec.time

#> 1 1 2 <NA> 0.035

#> 2 2 2 <NA> 0.055

#> 3 2 2 <NA> 0.071

#> 4 2 2 <NA> 0.055

#> 5 2 2 <NA> 0.062

#> 6 2 2 <NA> 0.057
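Here opt.paths is a list with one data.frame per outer iteration. If you prefer one stacked data.frame with an iter column, like the one shown for tuning above, plain base R suffices. The sketch uses two tiny made-up data.frames with only a few of the columns; with the real results you would pass opt.paths instead of paths.

```r
# Hypothetical toy optimization paths for two outer iterations (values made up)
paths <- list(
  data.frame(crim = c(0, 1), lstat = c(0, 0), mse.test.mean = c(10, 8)),
  data.frame(crim = c(0, 0), lstat = c(0, 1), mse.test.mean = c(12, 7))
)
# Append an 'iter' column to each data.frame, then stack them row-wise
stacked <- do.call(rbind, Map(function(df, i) cbind(df, iter = i),
                              paths, seq_along(paths)))
stacked
```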

An easy-to-read version of the optimization path for sequential feature selection can be obtained with function analyzeFeatSelResult.

analyzeFeatSelResult(r$extract[[1]])

#> Features : 10

#> Performance : mse.test.mean=20.1593896

#> crim, zn, indus, nox, rm, dis, rad, tax, ptratio, lstat

#>

#> Path to optimum:

#> - Features: 0 Init : Perf = 80.33 Diff: NA *

#> - Features: 1 Add : lstat Perf = 36.451 Diff: 43.879 *

#> - Features: 2 Add : rm Perf = 27.289 Diff: 9.1623 *

#> - Features: 3 Add : ptratio Perf = 24.004 Diff: 3.2849 *

#> - Features: 4 Add : nox Perf = 23.513 Diff: 0.49082 *

#> - Features: 5 Add : dis Perf = 21.49 Diff: 2.023 *

#> - Features: 6 Add : crim Perf = 21.12 Diff: 0.37008 *

#> - Features: 7 Add : indus Perf = 20.82 Diff: 0.29994 *

#> - Features: 8 Add : rad Perf = 20.609 Diff: 0.21054 *

#> - Features: 9 Add : tax Perf = 20.209 Diff: 0.40059 *

#> - Features: 10 Add : zn Perf = 20.159 Diff: 0.049441 *

#>

#> Stopped, because no improving feature was found.
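Sequential forward selection, as traced in the path above, starts from the empty feature set, in each step adds the feature that improves the resampled performance the most, and stops once no remaining feature improves it. The following base R sketch (not mlr's implementation) reproduces that logic for a linear model on the built-in mtcars data with one fixed 3-fold CV split; the data set, the helper cv_mse and the strict-improvement stopping rule are assumptions for illustration.

```r
# Sequential forward selection with a linear model (illustration only).
set.seed(42)
data(mtcars)
target <- "mpg"
candidates <- setdiff(names(mtcars), target)
folds <- sample(rep(1:3, length.out = nrow(mtcars)))  # one fixed 3-fold split

# 3-fold CV mean squared error of lm using the given features
cv_mse <- function(feats) {
  rhs <- if (length(feats) == 0) "1" else paste(feats, collapse = " + ")
  f <- as.formula(paste(target, "~", rhs))
  mean(sapply(1:3, function(i) {
    fit <- lm(f, data = mtcars[folds != i, ])
    test <- mtcars[folds == i, ]
    mean((test[[target]] - predict(fit, test))^2)
  }))
}

selected <- character(0)
best <- cv_mse(selected)           # "Init": intercept-only model
repeat {
  remaining <- setdiff(candidates, selected)
  if (length(remaining) == 0) break
  scores <- sapply(remaining, function(f) cv_mse(c(selected, f)))
  if (min(scores) >= best) break   # no improving feature found: stop
  best <- min(scores)
  selected <- c(selected, names(which.min(scores)))
  cat("Add", tail(selected, 1), "-> CV MSE", round(best, 3), "\n")
}
selected
```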

Filter methods with tuning

Filter methods assign an importance value to each feature. Based on these values you can select a feature subset by either keeping all features with importance higher than a certain threshold or by keeping a fixed number or percentage of the highest ranking features. Often, neither the threshold nor the number or percentage of features is known in advance, and thus tuning is necessary.
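The three selection modes can be sketched in a few lines of base R. The filter used here is the absolute Pearson correlation with the target on the built-in mtcars data, chosen only because it needs no extra packages; it is not the chi.squared filter used below, and the cutoffs (0.6, 3 features, 50%) are arbitrary.

```r
data(mtcars)
# Filter scores: absolute correlation of each feature with mpg, highest first
imp <- sort(abs(cor(mtcars[, -1], mtcars$mpg))[, 1], decreasing = TRUE)

# (a) keep all features whose score exceeds a threshold
by_threshold <- names(imp)[imp > 0.6]
# (b) keep a fixed number of top-ranked features
by_abs <- names(imp)[seq_len(3)]
# (c) keep a fixed percentage (here 50%) of the top-ranked features
by_perc <- names(imp)[seq_len(ceiling(0.5 * length(imp)))]
```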

In the example below, the threshold value (fw.threshold) is tuned in the inner resampling loop. For this purpose the base Learner "regr.lm" is wrapped twice. First, makeFilterWrapper is used to fuse linear regression with a feature filtering preprocessing step. Then a tuning step is added by makeTuneWrapper.

## Tuning of the filter threshold in the inner loop

lrn = makeFilterWrapper(learner = "regr.lm", fw.method = "chi.squared")

ps = makeParamSet(makeDiscreteParam("fw.threshold", values = seq(0, 1, 0.2)))

ctrl = makeTuneControlGrid()

inner = makeResampleDesc("CV", iters = 3)

lrn = makeTuneWrapper(lrn, resampling = inner, par.set = ps, control = ctrl, show.info = FALSE)

## Outer resampling loop

outer = makeResampleDesc("CV", iters = 3)

r = resample(learner = lrn, task = bh.task, resampling = outer, models = TRUE, show.info = FALSE)

r

#> Resample Result

#> Task: BostonHousing-example

#> Learner: regr.lm.filtered.tuned

#> Aggr perf: mse.test.mean=25.3915155

#> Runtime: 4.05703
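Wrapping the filter into the learner matters because the filter scores are then recomputed on every training set, so no information from the test data leaks into the feature ranking. The base R sketch below mimics the double wrapping on the built-in mtcars data: fit_filtered_lm plays the role of the filter wrapper (an absolute-correlation filter keeping the top k features, which is an assumption, not chi.squared), and tune_k plays the role of the tune wrapper (3-fold inner CV over k = 1, ..., 5). All names are hypothetical.

```r
set.seed(7)
data(mtcars)
feats <- setdiff(names(mtcars), "mpg")

# Filter step: rank features by |cor| with mpg, computed on 'train' only
top_k <- function(train, k) {
  imp <- abs(cor(train[, feats], train$mpg))[, 1]
  names(sort(imp, decreasing = TRUE))[seq_len(k)]
}

# "Filter wrapper": filter, then fit lm on the kept features
fit_filtered_lm <- function(train, k) {
  keep <- top_k(train, k)
  lm(reformulate(keep, response = "mpg"), data = train)
}

# "Tune wrapper": choose k by inner 3-fold CV on the given training data
tune_k <- function(train, ks = 1:5, nfolds = 3) {
  folds <- sample(rep(seq_len(nfolds), length.out = nrow(train)))
  err <- sapply(ks, function(k) mean(sapply(seq_len(nfolds), function(i) {
    fit <- fit_filtered_lm(train[folds != i, ], k)  # filter refit per fold
    test <- train[folds == i, ]
    mean((test$mpg - predict(fit, test))^2)
  })))
  ks[which.min(err)]
}

k_best <- tune_k(mtcars)
final <- fit_filtered_lm(mtcars, k_best)
```

Calling tune_k on each outer training set, instead of on all of mtcars, gives the nested version.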

Accessing the selected features and optimal threshold

In the above example we kept the complete models.

Below are some examples that show how to extract information from the models.

r$models

#> [[1]]

#> Model for learner.id=regr.lm.filtered.tuned; learner.class=TuneWrapper

#> Trained on: task.id = BostonHousing-example; obs = 337; features = 13

#> Hyperparameters: fw.method=chi.squared

#>

#> [[2]]

#> Model for learner.id=regr.lm.filtered.tuned; learner.class=TuneWrapper

#> Trained on: task.id = BostonHousing-example; obs = 338; features = 13

#> Hyperparameters: fw.method=chi.squared

#>

#> [[3]]

#> Model for learner.id=regr.lm.filtered.tuned; learner.class=TuneWrapper

#> Trained on: task.id = BostonHousing-example; obs = 337; features = 13

#> Hyperparameters: fw.method=chi.squared

The result of the feature selection can be extracted by function getFilteredFeatures. In two of the three outer iterations all 13 features are selected; in the second one, chas is dropped.

lapply(r$models, function(x) getFilteredFeatures(x$learner.model$next.model))

#> [[1]]

#> [1] "crim" "zn" "indus" "chas" "nox" "rm" "age"

#> [8] "dis" "rad" "tax" "ptratio" "b" "lstat"

#>

#> [[2]]

#> [1] "crim" "zn" "indus" "nox" "rm" "age" "dis"

#> [8] "rad" "tax" "ptratio" "b" "lstat"

#>

#> [[3]]

#> [1] "crim" "zn" "indus" "chas" "nox" "rm" "age"

#> [8] "dis" "rad" "tax" "ptratio" "b" "lstat"

Below the tune results and optimization paths are accessed.

res = lapply(r$models, getTuneResult)

res

#> [[1]]

#> Tune result:

#> Op. pars: fw.threshold=0

#> mse.test.mean=24.8915992

#>

#> [[2]]

#> Tune result:

#> Op. pars: fw.threshold=0.4

#> mse.test.mean=27.1812625

#>

#> [[3]]

#> Tune result:

#> Op. pars: fw.threshold=0

#> mse.test.mean=19.7012695

opt.paths = lapply(res, function(x) as.data.frame(x$opt.path))

opt.paths[[1]]

#> fw.threshold mse.test.mean dob eol error.message exec.time

#> 1 0 24.89160 1 NA <NA> 0.213

#> 2 0.2 25.18817 2 NA <NA> 0.214

#> 3 0.4 25.18817 3 NA <NA> 0.203

#> 4 0.6 32.15930 4 NA <NA> 0.198

#> 5 0.8 90.89848 5 NA <NA> 0.197

#> 6 1 90.89848 6 NA <NA> 0.185

Benchmark experiments

In a benchmark experiment multiple learners are compared on one or several tasks (see also the section about benchmarking). Nested resampling in benchmark experiments is achieved the same way as in resampling:

- First, use makeTuneWrapper or makeFeatSelWrapper to generate wrapped Learners with the inner resampling strategies of your choice.

- Second, call benchmark and specify the outer resampling strategies for all tasks.
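The key property of a benchmark experiment is that all learners are evaluated on the same outer splits, so their performances can be compared iteration by iteration. A minimal base R sketch on the built-in cars data with two hypothetical learners (a linear and a quadratic lm) follows; each learner is just a function from training data to a fitted model, so a tuned learner would run its own inner resampling loop inside that function. The result mimics the long format of getBMRPerformances(..., as.df = TRUE) but is plain base R.

```r
set.seed(3)
data(cars)
outer <- sample(rep(1:3, length.out = nrow(cars)))  # outer split shared by all learners

# Hypothetical learners: functions mapping training data to a fitted model
learners <- list(
  lm.linear = function(train) lm(dist ~ speed, data = train),
  lm.quad   = function(train) lm(dist ~ poly(speed, 2), data = train)
)

# One row per learner and outer iteration
perf <- do.call(rbind, lapply(names(learners), function(id) {
  do.call(rbind, lapply(1:3, function(i) {
    fit <- learners[[id]](cars[outer != i, ])
    test <- cars[outer == i, ]
    data.frame(learner.id = id, iter = i,
               mse = mean((test$dist - predict(fit, test))^2))
  }))
}))
perf
aggregate(mse ~ learner.id, data = perf, FUN = mean)  # aggregated performance
```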

The inner resampling strategies should be resample descriptions. You can use different inner resampling strategies for different wrapped learners. For example, it might be practical to do fewer subsampling or bootstrap iterations for slower learners.

If you have larger benchmark experiments you might want to have a look at the section about parallelization.

As mentioned in the section about benchmark experiments you can also use different resampling strategies for different learning tasks by passing a list of resampling descriptions or instances to benchmark.

We will see three examples to show different benchmark settings:

- Two data sets + two classification algorithms + tuning

- One data set + two regression algorithms + feature selection

- One data set + two regression algorithms + feature filtering + tuning

Example 1: Two tasks, two learners, tuning

Below is a benchmark experiment with two data sets, iris and sonar, and two Learners, ksvm and kknn, that are both tuned.

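As inner resampling strategies we use holdout for ksvm and subsampling with 3 iterations for kknn. As outer resampling strategies we take holdout for the iris data (iris.task) and bootstrap with 2 iterations for the sonar data (sonar.task). We report the accuracy (acc) and the balanced error rate (ber) on the outer test sets. Since no measure is passed to makeTuneWrapper, the tuning itself uses the default measure for classification, the mean misclassification error (mmce), which ranks hyperparameter settings the same way as accuracy.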

## List of learning tasks

tasks = list(iris.task, sonar.task)

## Tune svm in the inner resampling loop

ps = makeParamSet(

makeDiscreteParam("C", 2^(-1:1)),

makeDiscreteParam("sigma", 2^(-1:1)))

ctrl = makeTuneControlGrid()

inner = makeResampleDesc("Holdout")

lrn1 = makeTuneWrapper("classif.ksvm", resampling = inner, par.set = ps, control = ctrl,

show.info = FALSE)

## Tune k-nearest neighbor in inner resampling loop

ps = makeParamSet(makeDiscreteParam("k", 3:5))

ctrl = makeTuneControlGrid()

inner = makeResampleDesc("Subsample", iters = 3)

lrn2 = makeTuneWrapper("classif.kknn", resampling = inner, par.set = ps, control = ctrl,

show.info = FALSE)

## Learners

lrns = list(lrn1, lrn2)

## Outer resampling loop

outer = list(makeResampleDesc("Holdout"), makeResampleDesc("Bootstrap", iters = 2))

res = benchmark(lrns, tasks, outer, measures = list(acc, ber), show.info = FALSE)

res

#> task.id learner.id acc.test.mean ber.test.mean

#> 1 iris-example classif.ksvm.tuned 0.9400000 0.05882353

#> 2 iris-example classif.kknn.tuned 0.9200000 0.08683473

#> 3 Sonar-example classif.ksvm.tuned 0.5289307 0.50000000

#> 4 Sonar-example classif.kknn.tuned 0.8077080 0.19549714

The print method for the BenchmarkResult shows the aggregated performances from the outer resampling loop.
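The two reported measures differ in how they treat the classes: acc is the overall share of correct predictions, whereas the balanced error rate (ber) first computes the misclassification rate within each true class and then averages these rates. A base R sketch with made-up labels (the function names are hypothetical, not mlr's measure objects):

```r
# Toy two-class example with made-up labels
truth <- factor(c("a", "a", "a", "a", "b", "b"))
pred  <- factor(c("a", "a", "a", "b", "b", "a"), levels = levels(truth))

mmce_fn <- function(truth, pred) mean(pred != truth)
acc_fn  <- function(truth, pred) 1 - mmce_fn(truth, pred)
# BER: misclassification rate within each true class, then averaged over classes
ber_fn  <- function(truth, pred)
  mean(sapply(levels(truth), function(cl) mean(pred[truth == cl] != cl)))

acc_fn(truth, pred)   # 4 of 6 correct
ber_fn(truth, pred)   # (1/4 + 1/2) / 2 = 0.375

# Predicting the same class for every observation in a two-class problem
const <- factor(rep("a", 6), levels = levels(truth))
ber_fn(truth, const)  # (0 + 1) / 2 = 0.5
```

A BER of exactly 0.5 in a two-class problem, as reported for the tuned ksvm on the Sonar data, is for example what a classifier gets when it predicts a single class for every observation; whether that happened here would have to be checked in the predictions.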

As you might recall, mlr offers several accessor functions to extract information from the benchmark result. These are listed on the help page of BenchmarkResult, and many examples are shown on the tutorial page about benchmark experiments.

The performance values in individual outer resampling runs can be obtained by getBMRPerformances. Note that, since we used different outer resampling strategies for the two tasks, the number of rows per task differs.

getBMRPerformances(res, as.df = TRUE)

#> task.id learner.id iter acc ber

#> 1 iris-example classif.ksvm.tuned 1 0.9400000 0.05882353

#> 2 iris-example classif.kknn.tuned 1 0.9200000 0.08683473

#> 3 Sonar-example classif.ksvm.tuned 1 0.5373134 0.50000000

#> 4 Sonar-example classif.ksvm.tuned 2 0.5205479 0.50000000

#> 5 Sonar-example classif.kknn.tuned 1 0.8208955 0.18234767

#> 6 Sonar-example classif.kknn.tuned 2 0.7945205 0.20864662

The results from the parameter tuning can be obtained through function getBMRTuneResults.

getBMRTuneResults(res)

#> $`iris-example`

#> $`iris-example`$classif.ksvm.tuned

#> $`iris-example`$classif.ksvm.tuned[[1]]

#> Tune result:

#> Op. pars: C=0.5; sigma=0.5

#> mmce.test.mean=0.0588235

#>

#>

#> $`iris-example`$classif.kknn.tuned

#> $`iris-example`$classif.kknn.tuned[[1]]

#> Tune result:

#> Op. pars: k=3

#> mmce.test.mean=0.0490196

#>

#>

#>

#> $`Sonar-example`

#> $`Sonar-example`$classif.ksvm.tuned

#> $`Sonar-example`$classif.ksvm.tuned[[1]]

#> Tune result:

#> Op. pars: C=1; sigma=2

#> mmce.test.mean=0.3428571

#>

#> $`Sonar-example`$classif.ksvm.tuned[[2]]

#> Tune result:

#> Op. pars: C=2; sigma=0.5

#> mmce.test.mean=0.2000000

#>

#>

#> $`Sonar-example`$classif.kknn.tuned

#> $`Sonar-example`$classif.kknn.tuned[[1]]

#> Tune result:

#> Op. pars: k=4

#> mmce.test.mean=0.1095238

#>

#> $`Sonar-example`$classif.kknn.tuned[[2]]

#> Tune result:

#> Op. pars: k=3

#> mmce.test.mean=0.0666667

As for several other accessor functions, a clearer representation as a data.frame can be achieved by setting as.df = TRUE.

getBMRTuneResults(res, as.df = TRUE)

#> task.id learner.id iter C sigma mmce.test.mean k

#> 1 iris-example classif.ksvm.tuned 1 0.5 0.5 0.05882353 NA

#> 2 iris-example classif.kknn.tuned 1 NA NA 0.04901961 3

#> 3 Sonar-example classif.ksvm.tuned 1 1.0 2.0 0.34285714 NA

#> 4 Sonar-example classif.ksvm.tuned 2 2.0 0.5 0.20000000 NA

#> 5 Sonar-example classif.kknn.tuned 1 NA NA 0.10952381 4

#> 6 Sonar-example classif.kknn.tuned 2 NA NA 0.06666667 3

It is also possible to extract the tuning results for individual tasks and learners and, as shown in earlier examples, inspect the optimization path.

tune.res = getBMRTuneResults(res, task.ids = "Sonar-example", learner.ids = "classif.ksvm.tuned",

as.df = TRUE)

tune.res

#> task.id learner.id iter C sigma mmce.test.mean

#> 1 Sonar-example classif.ksvm.tuned 1 1 2.0 0.3428571

#> 2 Sonar-example classif.ksvm.tuned 2 2 0.5 0.2000000

getNestedTuneResultsOptPathDf(res$results[["Sonar-example"]][["classif.ksvm.tuned"]])

Example 2: One task, two learners, feature selection

Let's see how we can do feature selection in a benchmark experiment:

## Feature selection in inner resampling loop

ctrl = makeFeatSelControlSequential(method = "sfs")

inner = makeResampleDesc("Subsample", iters = 2)

lrn = makeFeatSelWrapper("regr.lm", resampling = inner, control = ctrl, show.info = FALSE)

## Learners

lrns = list("regr.rpart", lrn)

## Outer resampling loop

outer = makeResampleDesc("Subsample", iters = 2)

res = benchmark(tasks = bh.task, learners = lrns, resampling = outer, show.info = FALSE)

res

#> task.id learner.id mse.test.mean

#> 1 BostonHousing-example regr.rpart 25.86232

#> 2 BostonHousing-example regr.lm.featsel 25.07465

The selected features can be extracted by function getBMRFeatSelResults. By default, a nested list, with the first level indicating the task and the second level indicating the learner, is returned. If only a single learner or, as in our case, a single task is considered, setting drop = TRUE simplifies the result to a flat list.

getBMRFeatSelResults(res)

#> $`BostonHousing-example`

#> $`BostonHousing-example`$regr.rpart

#> NULL

#>

#> $`BostonHousing-example`$regr.lm.featsel

#> $`BostonHousing-example`$regr.lm.featsel[[1]]

#> FeatSel result:

#> Features (8): crim, zn, chas, nox, rm, dis, ptratio, lstat

#> mse.test.mean=26.7257383

#>

#> $`BostonHousing-example`$regr.lm.featsel[[2]]

#> FeatSel result:

#> Features (10): crim, zn, nox, rm, dis, rad, tax, ptratio, b, lstat

#> mse.test.mean=24.2874464

getBMRFeatSelResults(res, drop = TRUE)

#> $regr.rpart

#> NULL

#>

#> $regr.lm.featsel

#> $regr.lm.featsel[[1]]

#> FeatSel result:

#> Features (8): crim, zn, chas, nox, rm, dis, ptratio, lstat

#> mse.test.mean=26.7257383

#>

#> $regr.lm.featsel[[2]]

#> FeatSel result:

#> Features (10): crim, zn, nox, rm, dis, rad, tax, ptratio, b, lstat

#> mse.test.mean=24.2874464

You can access results for individual learners and tasks and inspect them further.

feats = getBMRFeatSelResults(res, learner.ids = "regr.lm.featsel", drop = TRUE)

## Selected features in the first outer resampling iteration

feats[[1]]$x

#> [1] "crim" "zn" "chas" "nox" "rm" "dis" "ptratio"

#> [8] "lstat"

## Resampled performance of the selected feature subset on the first outer training set

feats[[1]]$y

#> mse.test.mean

#> 26.72574

As for tuning, you can extract the optimization paths. The resulting data.frames contain, among others, binary columns for all features, indicating if they were included in the linear regression model, and the corresponding performances. analyzeFeatSelResult gives a clearer overview.

opt.paths = lapply(feats, function(x) as.data.frame(x$opt.path))

head(opt.paths[[1]])

#> crim zn indus chas nox rm age dis rad tax ptratio b lstat mse.test.mean

#> 1 0 0 0 0 0 0 0 0 0 0 0 0 0 90.16159

#> 2 1 0 0 0 0 0 0 0 0 0 0 0 0 82.85880

#> 3 0 1 0 0 0 0 0 0 0 0 0 0 0 79.55202

#> 4 0 0 1 0 0 0 0 0 0 0 0 0 0 70.02071

#> 5 0 0 0 1 0 0 0 0 0 0 0 0 0 86.93409

#> 6 0 0 0 0 1 0 0 0 0 0 0 0 0 76.32457

#> dob eol error.message exec.time

#> 1 1 2 <NA> 0.014

#> 2 2 2 <NA> 0.022

#> 3 2 2 <NA> 0.021

#> 4 2 2 <NA> 0.021

#> 5 2 2 <NA> 0.023

#> 6 2 2 <NA> 0.022

analyzeFeatSelResult(feats[[1]])

#> Features : 8

#> Performance : mse.test.mean=26.7257383

#> crim, zn, chas, nox, rm, dis, ptratio, lstat

#>

#> Path to optimum:

#> - Features: 0 Init : Perf = 90.162 Diff: NA *

#> - Features: 1 Add : lstat Perf = 42.646 Diff: 47.515 *

#> - Features: 2 Add : ptratio Perf = 34.52 Diff: 8.1263 *

#> - Features: 3 Add : rm Perf = 30.454 Diff: 4.066 *

#> - Features: 4 Add : dis Perf = 29.405 Diff: 1.0495 *

#> - Features: 5 Add : nox Perf = 28.059 Diff: 1.3454 *

#> - Features: 6 Add : chas Perf = 27.334 Diff: 0.72499 *

#> - Features: 7 Add : zn Perf = 26.901 Diff: 0.43296 *

#> - Features: 8 Add : crim Perf = 26.726 Diff: 0.17558 *

#>

#> Stopped, because no improving feature was found.

Example 3: One task, two learners, feature filtering with tuning

Here is a minimal example for feature filtering with tuning of the feature subset size.

## Feature filtering with tuning in the inner resampling loop

lrn = makeFilterWrapper(learner = "regr.lm", fw.method = "chi.squared")

ps = makeParamSet(makeDiscreteParam("fw.abs", values = seq_len(getTaskNFeats(bh.task))))

ctrl = makeTuneControlGrid()

inner = makeResampleDesc("CV", iters = 2)

lrn = makeTuneWrapper(lrn, resampling = inner, par.set = ps, control = ctrl,

show.info = FALSE)

## Learners

lrns = list("regr.rpart", lrn)

## Outer resampling loop

outer = makeResampleDesc("Subsample", iters = 3)

res = benchmark(tasks = bh.task, learners = lrns, resampling = outer, show.info = FALSE)

res

#> task.id learner.id mse.test.mean

#> 1 BostonHousing-example regr.rpart 22.11687

#> 2 BostonHousing-example regr.lm.filtered.tuned 23.76666

## Performances on individual outer test data sets

getBMRPerformances(res, as.df = TRUE)

#> task.id learner.id iter mse

#> 1 BostonHousing-example regr.rpart 1 23.55486

#> 2 BostonHousing-example regr.rpart 2 20.03453

#> 3 BostonHousing-example regr.rpart 3 22.76121

#> 4 BostonHousing-example regr.lm.filtered.tuned 1 27.51086

#> 5 BostonHousing-example regr.lm.filtered.tuned 2 24.87820

#> 6 BostonHousing-example regr.lm.filtered.tuned 3 18.91091