This document defines a set of JavaScript APIs that allow local media,

including audio and video, to be requested from a platform.

This document is not complete. It is subject to major changes and, while

early experimentation is encouraged, it is therefore not intended for

implementation. The API is based on preliminary work done in the

WHATWG.

Terminology

- HTML Terms:

-

The EventHandler

interface represents a callback used for event handlers as defined in

[[!HTML5]].

The concepts queue a

task and fires

a simple event are defined in [[!HTML5]].

The terms event

handlers and

event handler event types are defined in [[!HTML5]].

- DOMException

-

The term

DOMException is defined in WebIDL

[[!WEBIDL-1]]

- source

-

A source is the "thing" providing the source of a media stream

track. The source is the broadcaster of the media itself. A source can

be a physical webcam, microphone, local video or audio file from the

user's hard drive, network resource, or static image. Note that this

document describes the use of microphone and camera type sources only;

the use of other source types is described in other documents.

An application that has no prior authorization regarding sources is

only given the number of available sources, their type and any

relationship to other devices. Additional information about sources can

become available when applications are authorized to use a source.

Sources do not have constraints — tracks have

constraints. When a source is connected to a track, it must produce

media that conforms to the constraints present on that track. Multiple

tracks can be attached to the same source. User Agent processing, such

as downsampling, MAY be used to ensure that all tracks have appropriate

media.

Sources have constrainable properties which have

capabilities and settings. The

constrainable properties are "owned" by the source and are common to

any (multiple) tracks that happen to be using the same source (e.g., if

two different track objects bound to the same source ask for the same

capability or setting information, they will get back the same

answer).

- Setting (Source Setting)

-

A setting refers to the immediate, current

value of the source's constrainable properties. Settings are always

read-only.

A source's settings can change dynamically over time due to

environmental conditions, sink configurations, or constraint changes. A

source's settings must always conform to the current set of mandatory

constraints on all attached tracks. A source that cannot conform to

mandatory constraints causes affected tracks to become

overconstrained and therefore muted.

A User Agent attempts to ensure that sources adhere to optional

constraints as closely as possible.

Although settings are a property of the source, they are only

exposed to the application through the tracks attached to the source.

This is exposed via the ConstrainablePattern interface.

- Capabilities

-

For each constrainable property, there is a capability that

describes whether it is supported by the source and if so, the range of

supported values. As with settings, capabilities are exposed to the

application via the ConstrainablePattern interface.

The values of the supported capabilities must be normalized to the

ranges and enumerated types defined in this specification.

A getCapabilities() call on a track returns the same

underlying per-source capabilities for all tracks connected to the

source.

Source capabilities are effectively constant. Applications should be

able to depend on a specific source having the same capabilities for

any browsing session.

This API is intentionally simplified. Capabilities are not capable

of describing interactions between different values. For instance, it

is not possible to accurately describe the capabilities of a camera

that can produce a high resolution video stream at a low frame rate and

lower resolutions at a higher frame rate. Capabilities describe the

complete range of each value. Interactions between constraints are

exposed by attempting to apply constraints.
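The limitation described above can be illustrated with a small model. This is a sketch under stated assumptions, not part of the API: the camera modes and the helper names (toCapabilities, withinCapabilities, supportedByAnyMode) are hypothetical, and the lower frame rate bound of 0 is an assumption made for the example.

```javascript
// Hypothetical camera: each mode pairs a resolution with its top frame rate.
const cameraModes = [
  { width: 1920, height: 1080, maxFrameRate: 15 },
  { width: 640, height: 480, maxFrameRate: 60 },
];

// Capabilities report only the complete range of each property on its own.
function toCapabilities(modes) {
  const range = (values) => ({ min: Math.min(...values), max: Math.max(...values) });
  return {
    width: range(modes.map((m) => m.width)),
    height: range(modes.map((m) => m.height)),
    // Assumption for this sketch: any rate from 0 up to the fastest mode.
    frameRate: { min: 0, max: Math.max(...modes.map((m) => m.maxFrameRate)) },
  };
}

// True when every requested value lies inside the advertised per-property range.
function withinCapabilities(caps, request) {
  return Object.keys(request).every(
    (name) => request[name] >= caps[name].min && request[name] <= caps[name].max
  );
}

// True only when a single mode can deliver the whole request at once.
function supportedByAnyMode(modes, request) {
  return modes.some(
    (m) =>
      m.width === request.width &&
      m.height === request.height &&
      request.frameRate <= m.maxFrameRate
  );
}

const capabilities = toCapabilities(cameraModes);
const request = { width: 1920, height: 1080, frameRate: 60 };
console.log(withinCapabilities(capabilities, request)); // true
console.log(supportedByAnyMode(cameraModes, request)); // false
```

The request looks valid when each value is checked against its own range, yet no single mode satisfies it. This is why the interaction only becomes visible when the constraints are actually applied.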

- Constraints

-

Constraints provide a general control surface that allows

applications to both select an appropriate source for a track and, once

selected, to influence how a source operates.

Constraints limit the range of operating modes that a source can use

when providing media for a track. Without provided track constraints,

implementations are free to select a source's settings from the full

ranges of its supported capabilities. Implementations may also adjust

source settings at any time within the bounds imposed by all applied

constraints.

getUserMedia() uses constraints to help select an appropriate

source for a track and configure it. Additionally, the

ConstrainablePattern interface on tracks includes an API for

dynamically changing the track's constraints at any later time.

A track will not be connected to a source using

getUserMedia() if its initial constraints cannot be satisfied.

However, the ability to meet the constraints on a track can change over

time, and constraints can be changed. If circumstances change such that

constraints cannot be met, the ConstrainablePattern interface

defines an appropriate error to inform the application. How constraints

interact is explained in more detail elsewhere in this specification.

In general, User Agents will have more flexibility to optimize the

media streaming experience the fewer constraints are applied, so

application authors are strongly encouraged to use mandatory

constraints sparingly.

For each constrainable property, a constraint exists whose name

corresponds with the relevant source setting name and capability

name.

- RTCPeerConnection

-

RTCPeerConnection is defined in

[[WEBRTC10]].

MediaStream API

Introduction

The two main components in the MediaStream API are the

MediaStreamTrack and MediaStream

interfaces. The MediaStreamTrack object represents

media of a single type that originates from one media source in the User

Agent, e.g. video produced by a web camera. A

MediaStream is used to group several

MediaStreamTrack objects into one unit that can be

recorded or rendered in a media element.

Each MediaStream can contain zero or more

MediaStreamTrack objects. All tracks in a

MediaStream are intended to be synchronized when

rendered. This is not a hard requirement, since it might not be possible

to synchronize tracks from sources that have different clocks. Different

MediaStream objects do not need to be

synchronized.

While the intent is to synchronize tracks, it could be

better in some circumstances to permit tracks to lose synchronization. In

particular, when tracks are remotely sourced and real-time [[WEBRTC10]],

it can be better to allow loss of synchronization than to accumulate

delays or risk glitches and other artifacts. Implementations are expected

to understand the implications of choices regarding synchronization of

playback and the effect that these have on user perception.

A single MediaStreamTrack can represent

multi-channel content, such as stereo or 5.1 audio or stereoscopic video,

where the channels have a well defined relationship to each other.

Information about channels might be exposed through other APIs, such as

[[WEBAUDIO]], but this specification provides no direct access to

channels.

A MediaStream object has an input and an output

that represent the combined input and output of all the object's tracks.

The output of the MediaStream controls how the object

is rendered, e.g., what is saved if the object is recorded to a file or

what is displayed if the object is used in a video element.

A single MediaStream object can be attached to

multiple different outputs at the same time.

A new MediaStream object can be created from

existing media streams or tracks using the MediaStream() constructor. The constructor

argument can either be an existing MediaStream

object, in which case all the tracks of the given stream are added to the

new MediaStream object, or an array of

MediaStreamTrack objects. The latter form makes it

possible to compose a stream from different source streams.

Both MediaStream and

MediaStreamTrack objects can be cloned. A cloned

MediaStream contains clones of all member tracks from

the original stream. A cloned MediaStreamTrack has a

set of constraints that is

independent of the instance it is cloned from, which allows media from

the same source to have different constraints applied for different

consumers. The MediaStream object is also used in

contexts outside getUserMedia, such as [[WEBRTC10]].

MediaStream

The MediaStream()

constructor composes a new stream out of existing tracks. It takes an

optional argument of type MediaStream or an array of

MediaStreamTrack objects. When the constructor is invoked, the User

Agent must run the following steps:

-

Let stream be a newly constructed

MediaStream object.

-

Initialize stream's id attribute to a newly generated

value.

-

If the constructor's argument is present, construct a set of

tracks, tracks based on the type of argument:

-

Add each track in

tracks to stream.

-

Return stream.
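The constructor steps above can be sketched with a plain JavaScript model. SketchStream and the track objects are hypothetical stand-ins, not the real MediaStream interface, and the id format is an assumption chosen only to make each id distinct.

```javascript
// Illustrative model only; SketchStream is a hypothetical stand-in for MediaStream.
let sketchStreamCounter = 0;

class SketchStream {
  constructor(argument) {
    // Steps 1 and 2: a new object with a newly generated id.
    this.id = `sketch-stream-${++sketchStreamCounter}`;
    this.trackSet = new Set();

    // Step 3: build the set of tracks from the type of argument.
    let tracks = [];
    if (argument instanceof SketchStream) {
      tracks = [...argument.trackSet]; // all tracks of the given stream
    } else if (Array.isArray(argument)) {
      tracks = argument; // an array of track objects
    }

    // Step 4: add each track; a Set ignores tracks that are already present.
    for (const track of tracks) this.trackSet.add(track);
    // Step 5: the constructor returns the new stream implicitly.
  }
}

const cameraTrack = { id: 'v1', kind: 'video' };
const micTrack = { id: 'a1', kind: 'audio' };
const first = new SketchStream([cameraTrack]);
const second = new SketchStream([micTrack]);

// Compose one stream from tracks that belong to two different streams.
const composed = new SketchStream([...first.trackSet, ...second.trackSet]);
const copy = new SketchStream(composed);
console.log(composed.trackSet.size, copy.trackSet.size); // 2 2
console.log(copy.id !== composed.id); // true
```

In a browser, the corresponding calls would take real objects, for example `new MediaStream([videoTrack, audioTrack])` or `new MediaStream(existingStream)`.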

The tracks of a MediaStream are stored in a

track set. The track set MUST contain the

MediaStreamTrack objects that correspond to the

tracks of the stream. The relative order of the tracks in the set is User

Agent defined and the API will never put any requirements on the order.

The proper way to find a specific MediaStreamTrack

object in the set is to look it up by its id.

An object that reads data from the output of a

MediaStream is referred to as a

MediaStream consumer. The list of

MediaStream consumers currently includes media

elements (such as <video> and

<audio>) [[HTML5]], Web Real-Time Communications

(WebRTC; RTCPeerConnection) [[WEBRTC10]], media recording

(MediaRecorder) [[mediastream-recording]], image capture

(ImageCapture) [[image-capture]], and web audio

(MediaStreamAudioSourceNode) [[WEBAUDIO]].

MediaStream consumers must be able to

handle tracks being added and removed. This behavior is specified per

consumer.

A MediaStream object is said to be active when it has at least one

MediaStreamTrack that has not ended. A MediaStream that does not

have any tracks or only has tracks that are ended is inactive.
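The active/inactive rule can be expressed as a one-line predicate. The sketch below is a hypothetical model that operates on plain objects with a readyState field; it is not the browser implementation of the active attribute.

```javascript
// A stream is active when at least one of its tracks has not ended.
function isStreamActive(tracks) {
  return tracks.some((track) => track.readyState !== 'ended');
}

console.log(isStreamActive([])); // false: no tracks at all
console.log(isStreamActive([{ readyState: 'ended' }])); // false: only ended tracks
console.log(isStreamActive([{ readyState: 'ended' }, { readyState: 'live' }])); // true
```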

To add a track to a

MediaStream, the User Agent MUST run the following

steps:

-

Let track be the MediaStreamTrack

in question and stream the MediaStream

object to which track is to be added.

-

If track is already in stream's track set, then abort these steps.

-

Add track to stream's track set.

To remove a track from a

MediaStream, the User Agent MUST run the following

steps:

-

Let track be the MediaStreamTrack

in question and stream the MediaStream

object from which track is to be removed.

-

If track is not in stream's track set, then abort these steps.

-

Remove track from stream's track set.
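As a rough illustration of the two algorithms above, the following sketch models the track set as a JavaScript Set. The function names are hypothetical, and the boolean return value is an addition of this sketch that reports whether the steps ran to completion or were aborted.

```javascript
// Add a track: abort (return false) if it is already in the track set.
function addTrackToSet(trackSet, track) {
  if (trackSet.has(track)) return false;
  trackSet.add(track);
  return true;
}

// Remove a track: abort (return false) if it is not in the track set.
function removeTrackFromSet(trackSet, track) {
  if (!trackSet.has(track)) return false;
  trackSet.delete(track);
  return true;
}

const sketchSet = new Set();
const sketchTrack = { id: 'cam-1', kind: 'video' };
console.log(addTrackToSet(sketchSet, sketchTrack)); // true
console.log(addTrackToSet(sketchSet, sketchTrack)); // false, already present
console.log(removeTrackFromSet(sketchSet, sketchTrack)); // true
console.log(removeTrackFromSet(sketchSet, sketchTrack)); // false, already removed
```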

The User Agent may update a MediaStream's track set in response to, for example, an external

event. This specification does not specify any such cases, but other

specifications using the MediaStream API may. One such example is the

WebRTC 1.0 [[WEBRTC10]] specification where the track set of a MediaStream, received

from another peer, can be updated as a result of changes to the media

session.

When the User Agent initiates adding a track to a

MediaStream, with the exception of initializing a

newly created MediaStream with tracks, the User Agent

MUST queue a task that runs the following steps:

-

Let track be the MediaStreamTrack

in question and stream the MediaStream

object to which track is to be added.

-

Add track to

stream.

-

If the operation in the previous step was aborted prematurely,

then abort these steps.

-

Fire a track event named addtrack with

track at stream.

When the User Agent initiates removing a track from a

MediaStream, the User Agent MUST queue a task that

runs the following steps:

-

Let track be the MediaStreamTrack

in question and stream the MediaStream

object from which track is to be removed.

-

Remove track from

stream.

-

If the operation in the previous step was aborted prematurely,

then abort these steps.

-

Fire a track event named removetrack with

track at stream.
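The User Agent initiated steps above can be modelled as follows. This is a sketch, not the real event loop: the task queue is a plain array that is drained by hand, and every name in it (createSketchStream, userAgentAddsTrack, and so on) is hypothetical.

```javascript
// Illustrative model only: tasks are collected in an array and run on demand.
const sketchTaskQueue = [];

function createSketchStream() {
  return { trackSet: new Set(), handlers: { addtrack: [], removetrack: [] } };
}

// Stand-in for "fire a track event named e with track at stream".
function fireTrackEvent(name, track, stream) {
  for (const handler of stream.handlers[name]) handler({ type: name, track });
}

function userAgentAddsTrack(stream, track) {
  sketchTaskQueue.push(() => {
    if (stream.trackSet.has(track)) return; // add was aborted: no event
    stream.trackSet.add(track);
    fireTrackEvent('addtrack', track, stream);
  });
}

function userAgentRemovesTrack(stream, track) {
  sketchTaskQueue.push(() => {
    if (!stream.trackSet.has(track)) return; // remove was aborted: no event
    stream.trackSet.delete(track);
    fireTrackEvent('removetrack', track, stream);
  });
}

function runQueuedTasks() {
  while (sketchTaskQueue.length > 0) sketchTaskQueue.shift()();
}

const remoteStream = createSketchStream();
const seenEvents = [];
remoteStream.handlers.addtrack.push((event) => seenEvents.push(event.type));
remoteStream.handlers.removetrack.push((event) => seenEvents.push(event.type));

const remoteTrack = { id: 'remote-audio', kind: 'audio' };
userAgentAddsTrack(remoteStream, remoteTrack);
userAgentAddsTrack(remoteStream, remoteTrack); // duplicate: aborted, no second event
userAgentRemovesTrack(remoteStream, remoteTrack);
console.log(seenEvents.length); // 0, nothing happens until the queued tasks run
runQueuedTasks();
console.log(seenEvents); // [ 'addtrack', 'removetrack' ]
```

The duplicate add produces no event because the underlying add steps abort. This matches the "aborted prematurely" check in the steps above.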

- Constructor()

-

See the MediaStream constructor

algorithm

- Constructor(MediaStream stream)

-

See the MediaStream constructor

algorithm

- Constructor(sequence<MediaStreamTrack> tracks)

-

See the MediaStream constructor

algorithm

- readonly attribute DOMString id

-

When a MediaStream object is created, the User

Agent MUST generate an identifier string, and MUST initialize the

object's id attribute

to that string. A good practice is to use a UUID [[rfc4122]], which

is 36 characters long in its canonical form. To avoid fingerprinting,

implementations SHOULD use the forms in section 4.4 or 4.5 of RFC

4122 when generating UUIDs.

The id attribute MUST return the

value to which it was initialized when the object was created.

- sequence<MediaStreamTrack> getAudioTracks()

-

Returns a sequence of MediaStreamTrack objects

representing the audio tracks in this stream.

The getAudioTracks()

method MUST return a sequence that represents a snapshot of all the

MediaStreamTrack objects in this stream's

track set whose kind is equal to

"audio". The conversion from the track set to the sequence is User Agent defined and

the order does not have to be stable between calls.

- sequence<MediaStreamTrack> getVideoTracks()

-

Returns a sequence of MediaStreamTrack objects

representing the video tracks in this stream.

The getVideoTracks()

method MUST return a sequence that represents a snapshot of all the

MediaStreamTrack objects in this stream's

track set whose kind is equal to

"video". The conversion from the track set to the sequence is User Agent defined and

the order does not have to be stable between calls.

- sequence<MediaStreamTrack> getTracks()

-

Returns a sequence of MediaStreamTrack objects

representing all the tracks in this stream.

The getTracks() method

MUST return a sequence that represents a snapshot of all the

MediaStreamTrack objects in this stream's

track set, regardless of kind. The conversion from the

track set to the sequence is User Agent

defined and the order does not have to be stable between calls.

- MediaStreamTrack? getTrackById(DOMString trackId)

-

The getTrackById()

method MUST return either a MediaStreamTrack

object from this stream's track set whose

id is equal to

trackId, or null, if no such track exists.

- void addTrack(MediaStreamTrack track)

-

Adds the given MediaStreamTrack to this

MediaStream.

When the addTrack() method is

invoked, the User Agent MUST add the

track, specified by the method's first argument, to this

MediaStream.

- void removeTrack(MediaStreamTrack track)

-

Removes the given MediaStreamTrack object from

this MediaStream.

When the removeTrack() method

is invoked, the User Agent MUST remove

the track, specified by the method's first argument, from this

MediaStream.

- MediaStream clone()

-

Clones the given MediaStream and all its

tracks.

When the clone() method is invoked, the User Agent

MUST run the following steps:

-

Let streamClone be a newly constructed

MediaStream object.

-

Initialize streamClone's id attribute to a newly

generated value.

-

Clone each track in this

MediaStream object and add the result to

streamClone's track set.

- Return streamClone.

- readonly attribute boolean active

-

The active attribute MUST return true if

this MediaStream is active and false otherwise.

- attribute EventHandler onaddtrack

-

The event type of this event handler is addtrack.

- attribute EventHandler onremovetrack

-

The event type of this event handler is removetrack.
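The snapshot behaviour of getAudioTracks(), getVideoTracks() and getTracks(), and the null result of getTrackById(), can be sketched with a small model. The helper names below are hypothetical and operate on a plain Set of track-like objects rather than on a real MediaStream.

```javascript
// Returns a fresh array on every call, optionally filtered by kind.
function getTracksSnapshot(trackSet, kind) {
  const all = [...trackSet];
  return kind === undefined ? all : all.filter((track) => track.kind === kind);
}

// Returns the track with the given id, or null if no such track exists.
function getTrackByIdSketch(trackSet, trackId) {
  for (const track of trackSet) {
    if (track.id === trackId) return track;
  }
  return null;
}

const sketchTrackSet = new Set([
  { id: 'cam', kind: 'video' },
  { id: 'mic', kind: 'audio' },
]);

const audioSnapshot = getTracksSnapshot(sketchTrackSet, 'audio');
audioSnapshot.pop(); // changing the snapshot does not change the track set
console.log(sketchTrackSet.size); // 2
console.log(getTrackByIdSketch(sketchTrackSet, 'mic').kind); // audio
console.log(getTrackByIdSketch(sketchTrackSet, 'missing')); // null
```

Because the order of a snapshot is User Agent defined, code that needs a particular track is better served by an id lookup than by an array index.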

MediaStreamTrack

A MediaStreamTrack object represents a media

source in the User Agent. Several MediaStreamTrack

objects can represent the same media source, e.g., when the user chooses

the same camera in the UI shown by two consecutive calls to

getUserMedia().

The data from a MediaStreamTrack object does not

necessarily have a canonical binary form; for example, it could just be

"the video currently coming from the user's video camera". This allows

User Agents to manipulate media in whatever fashion is most suitable on

the user's platform.

A script can indicate that a MediaStreamTrack

object no longer needs its source with the stop() method. When all tracks

using a source have been stopped or ended by some other means, the source

is stopped. Unless there is a stored

permission for the source in question, the given permission is revoked

and the User Agent SHOULD also remove the "permission granted" indicator

for the source. If the data is being generated from a live source (e.g.,

a microphone or camera), then the User Agent SHOULD remove any active

"on-air" indicator for that source. An implementation may use a

per-source reference count to keep track of source usage, but the

specifics are out of scope for this specification.

To clone a track the User Agent MUST run

the following steps:

-

Let track be the MediaStreamTrack

object to be cloned.

-

Let trackClone be a newly constructed

MediaStreamTrack object.

-

Initialize trackClone's id attribute to a newly

generated value.

-

Initialize trackClone's kind, label, readyState, and

enabled

attributes by copying the corresponding values from

track.

-

Let trackClone's underlying source be the source of

track.

-

Set trackClone's constraints to the active constraints

of track.

-

Return trackClone.
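The cloning steps above can be sketched on plain objects. This is a hypothetical model: cloneTrackSketch, the id format, and the JSON-based copy of the constraints are assumptions of the example, chosen to show that the clone shares the source but carries its own constraints.

```javascript
let sketchCloneCounter = 0;

function cloneTrackSketch(track) {
  return {
    // A newly generated id (the format is arbitrary in this sketch).
    id: `${track.id}-clone-${++sketchCloneCounter}`,
    // kind, label, readyState and enabled are copied from the original.
    kind: track.kind,
    label: track.label,
    readyState: track.readyState,
    enabled: track.enabled,
    // The clone uses the same underlying source object.
    source: track.source,
    // The constraints are copied so that later changes stay independent.
    constraints: JSON.parse(JSON.stringify(track.constraints)),
  };
}

const webcamSource = { name: 'hypothetical webcam' };
const original = {
  id: 'track-1',
  kind: 'video',
  label: 'Front camera',
  readyState: 'live',
  enabled: false,
  source: webcamSource,
  constraints: { width: 1280 },
};

const clone = cloneTrackSketch(original);
clone.constraints.width = 320; // only the clone's constraints change
console.log(clone.source === original.source); // true
console.log(original.constraints.width); // 1280
console.log(clone.enabled); // false, copied from the original
```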

Life-cycle and Media Flow

Life-cycle

A MediaStreamTrack has two states in its

life-cycle: live and ended. A newly created

MediaStreamTrack can be in either state depending

on how it was created. For example, cloning an ended track results in a

new ended track. The current state is reflected by the object's

readyState

attribute.

In the live state, the track is active and media is

available for use by consumers (but may be replaced by

zero-information-content if the MediaStreamTrack is

muted or disabled, see below).

A muted or disabled MediaStreamTrack renders

either silence (audio), black frames (video), or a

zero-information-content equivalent. For example, a video element

sourced by a muted or disabled MediaStreamTrack

(contained within a MediaStream), is playing but

the rendered content is the muted output. When all tracks connected to

a source are muted or disabled, the "on-air" or "recording" indicator

for that source can be turned off; when the track is no longer muted or

disabled, it MUST be turned back on.

The muted/unmuted state of a track reflects whether the source

provides any media at this moment. The enabled/disabled state is under

application control and determines whether the track outputs media (to

its consumers). Hence, media from the source only flows when a

MediaStreamTrack object is both unmuted and

enabled.

A MediaStreamTrack is muted when the source is temporarily unable to

provide the track with data. A track can be muted by a user. Often this

action is outside the control of the application. This could be as a

result of the user hitting a hardware switch or toggling a control in

the operating system / browser chrome. A track can also be muted by the

User Agent.

Applications are able to enable or

disable a MediaStreamTrack to prevent it from

rendering media from the source. A muted track will, however, render

silence and blackness regardless of the enabled state. A disabled track is

logically equivalent to a muted track, from a consumer point of

view.

For a newly created MediaStreamTrack object, the

following applies. The track is always enabled unless stated otherwise

(for example when cloned) and the muted state reflects the state of the

source at the time the track is created.

A MediaStreamTrack object is said to

end when the source of the track is disconnected or

exhausted.

If all MediaStreamTracks that are using the same

source are ended, the source will be

stopped.

When a MediaStreamTrack object ends for any

reason (e.g., because the user rescinds the permission for the page to

use the local camera, or because the application invoked the

stop() method on

the MediaStreamTrack object, or because the User

Agent has instructed the track to end for any reason) it is said to be

ended.

When a MediaStreamTrack track ends

for any reason other than the stop() method being invoked,

the User Agent MUST queue a task that runs the following steps:

-

If the track's readyState attribute

has the value ended already, then abort these

steps.

-

Set track's readyState attribute

to ended.

-

Notify track's source that track is

ended so that the source may be stopped, unless other

MediaStreamTrack objects depend on it.

-

Fire a simple event named ended at the object.

If the end of the stream was reached due to a user request, the

event source for this event is the user interaction event source.
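The body of the queued task above can be sketched as follows. The model is hypothetical: the source tracks its dependent tracks in a Set named liveTracks, and fired events are recorded in an array instead of being dispatched.

```javascript
// Body of the task that runs when a track ends (illustrative model only).
function endTrackSketch(track) {
  if (track.readyState === 'ended') return; // already ended: abort
  track.readyState = 'ended';

  // Notify the source; it stops only when no other track depends on it.
  const source = track.source;
  source.liveTracks.delete(track);
  if (source.liveTracks.size === 0) source.stopped = true;

  track.firedEvents.push('ended'); // stands in for firing a simple event
}

const sharedSource = { stopped: false, liveTracks: new Set() };
const trackA = { readyState: 'live', source: sharedSource, firedEvents: [] };
const trackB = { readyState: 'live', source: sharedSource, firedEvents: [] };
sharedSource.liveTracks.add(trackA).add(trackB);

endTrackSketch(trackA);
console.log(sharedSource.stopped); // false, trackB still uses the source
endTrackSketch(trackA); // second call aborts, no duplicate event
endTrackSketch(trackB);
console.log(sharedSource.stopped); // true
console.log(trackA.firedEvents.length); // 1
```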

Media Flow

There are two dimensions related to the media flow for a

live MediaStreamTrack: muted / not

muted, and enabled / disabled.

Muted refers to the input to the

MediaStreamTrack. If live samples are not made

available to the MediaStreamTrack it is muted.

The muted state is outside the application's control, but can be observed by

the application by reading the muted attribute and listening

to the associated events mute and unmute. There can be

several reasons for a MediaStreamTrack to be muted:

the user pushing a physical mute button on the microphone, the user

toggling a control in the operating system, the user clicking a mute

button in the browser chrome, or the User Agent muting the track on

behalf of the user.

To update a track's muted state

to newState, the User Agent MUST queue a task to run the

following steps:

-

Let track be the MediaStreamTrack in

question.

-

Set track's muted attribute to

newState.

-

If newState is true let

eventName be mute, otherwise

unmute.

-

Fire a simple event named eventName on

track.
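The muted state update above can be sketched with a hand-driven task queue. The names are hypothetical, and fired events are recorded in an array rather than dispatched, so this is a model of the ordering only.

```javascript
const mutedTaskQueue = [];

// Queue a task that sets the muted attribute and then fires mute or unmute.
function updateMutedState(track, newState) {
  mutedTaskQueue.push(() => {
    track.muted = newState;
    const eventName = newState ? 'mute' : 'unmute';
    track.firedEvents.push(eventName); // stands in for firing a simple event
  });
}

const micTrackSketch = { muted: false, firedEvents: [] };
updateMutedState(micTrackSketch, true);
updateMutedState(micTrackSketch, false);
console.log(micTrackSketch.firedEvents.length); // 0, the tasks have not run yet

while (mutedTaskQueue.length > 0) mutedTaskQueue.shift()();
console.log(micTrackSketch.firedEvents); // [ 'mute', 'unmute' ]
console.log(micTrackSketch.muted); // false
```

In a browser, an application would observe the same transitions by reading the muted attribute and listening for the mute and unmute events on the track.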

Enabled/disabled on the other hand is

available to the application to control (and observe) via the

enabled

attribute.

The result for the consumer is the same in the sense that whenever

a MediaStreamTrack is muted or disabled (or both), the

consumer gets zero-information-content, which means silence for audio

and black frames for video. In other words, media from the source only

flows when a MediaStreamTrack object is both

unmuted and enabled. For example, a video element sourced by a muted or

disabled MediaStreamTrack (contained in a

MediaStream), is playing but rendering

blackness.

For a newly created MediaStreamTrack object, the

following applies: the track is always enabled unless stated otherwise

(for example when cloned) and the muted state reflects the state of the

source at the time the track is created.
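The two dimensions of media flow can be summarised in a small truth table. The function below is a hypothetical model for a live track described by plain kind, muted and enabled fields; it is not an API defined by this document.

```javascript
// What a consumer receives from a live track in this model.
function consumerOutput(track) {
  const flowing = !track.muted && track.enabled;
  if (flowing) return 'media';
  // Zero-information-content: silence for audio, black frames for video.
  return track.kind === 'audio' ? 'silence' : 'black frames';
}

console.log(consumerOutput({ kind: 'video', muted: false, enabled: true })); // media
console.log(consumerOutput({ kind: 'video', muted: true, enabled: true })); // black frames
console.log(consumerOutput({ kind: 'audio', muted: false, enabled: false })); // silence
console.log(consumerOutput({ kind: 'audio', muted: true, enabled: false })); // silence
```

Only the enabled dimension is writable by the application. The muted dimension is reported by the User Agent.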

Tracks and Constraints

Constraints are set on tracks and may affect sources.

Whether Constraints were provided at track

initialization time or need to be established later at runtime, the

APIs defined in the ConstrainablePattern Interface allow the

retrieval and manipulation of the constraints currently established on

a track.

If the overconstrained event is fired, the

track MUST be muted until either new satisfiable constraints are

applied or the existing constraints become satisfiable.

Track Source Types

- camera

-

A valid source type only for video

MediaStreamTracks. The source is a local

video-producing camera source.

- microphone

-

A valid source type only for audio

MediaStreamTracks. The source is a local

audio-producing microphone source.

Constrainable Properties

The names of the initial set of constrainable properties for

MediaStreamTrack are defined below.

The following constrainable properties are defined to apply to both

video and audio MediaStreamTrack objects:

| Property Name | Values | Notes |
| --- | --- | --- |
| sourceType | SourceTypeEnum | The type of the source of the MediaStreamTrack. Note that the setting of this property is uniquely determined by the source that is attached to the Track. In particular, getCapabilities() will return only a single value for sourceType. This property can therefore be used for initial media selection with getUserMedia(). However, it is not useful for subsequent media control with applyConstraints(), since any attempt to set a different value will result in an unsatisfiable ConstraintSet. |
| deviceId | DOMString | The origin-unique identifier for the source of the MediaStreamTrack. The same identifier MUST be valid between browsing sessions of this origin, but MUST also be different for other origins. Some sort of GUID is recommended for the identifier. Note that the setting of this property is uniquely determined by the source that is attached to the Track. In particular, getCapabilities() will return only a single value for deviceId. This property can therefore be used for initial media selection with getUserMedia(). However, it is not useful for subsequent media control with applyConstraints(), since any attempt to set a different value will result in an unsatisfiable ConstraintSet. |
| groupId | DOMString | The origin-unique group identifier for the source of the MediaStreamTrack. Two devices have the same group identifier if they belong to the same physical device; for example, the audio input and output devices representing the speaker and microphone of the same headset would have the same groupId. |

The following constrainable properties are defined to apply only to

video MediaStreamTrack objects:

| Property Name | Values | Notes |
| --- | --- | --- |
| width | ConstrainLong | The width or width range, in pixels. As a capability, the range should span the video source's pre-set width values with min being the smallest width and max being the largest width. |
| height | ConstrainLong | The height or height range, in pixels. As a capability, the range should span the video source's pre-set height values with min being the smallest height and max being the largest height. |
| frameRate | ConstrainDouble | The exact frame rate (frames per second) or frame rate range. If this frame rate cannot be determined (e.g. the source does not natively provide a frame rate, or the frame rate cannot be determined from the source stream), then this value MUST refer to the User Agent's vsync display rate. |
| aspectRatio | ConstrainDouble | The exact aspect ratio (width in pixels divided by height in pixels, represented as a double rounded to the tenth decimal place) or aspect ratio range. |
| facingMode | ConstrainDOMString | This string (or each string, when a list) should be one of the members of VideoFacingModeEnum. The members describe the directions that the camera can face, as seen from the user's perspective. Note that getConstraints may not return exactly the same string for strings not in this enum. This preserves the possibility of using a future version of WebIDL enum for this property. |

- user

-

The source is facing toward the user (a self-view camera).

- environment

-

The source is facing away from the user (viewing the

environment).

- left

-

The source is facing to the left of the user.

- right

-

The source is facing to the right of the user.
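The aspectRatio definition in the video property table (width divided by height, rounded to the tenth decimal place) can be worked through numerically. The helper name aspectRatioOf is hypothetical, and the rounding approach is one reasonable reading of the definition rather than a mandated algorithm.

```javascript
// Width divided by height, rounded to ten decimal places.
function aspectRatioOf(width, height) {
  return Math.round((width / height) * 1e10) / 1e10;
}

console.log(aspectRatioOf(1280, 720)); // 1.7777777778
console.log(aspectRatioOf(800, 600)); // 1.3333333333
console.log(aspectRatioOf(640, 480) === aspectRatioOf(800, 600)); // true
```

Rounding to a fixed number of places means that two resolutions with the same proportions compare equal, which matters when an exact aspect ratio is requested.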

Below is an illustration of the video facing modes in relation to

the user.

The following constrainable properties are defined to apply only to

audio MediaStreamTrack objects:

| Property Name | Values | Notes |
| --- | --- | --- |
| volume | ConstrainDouble | The volume or volume range, as a multiplier of the linear audio sample values. A volume of 0.0 is silence, while a volume of 1.0 is the maximum supported volume. A volume of 0.5 will result in an approximately 6 dBSPL change in the sound pressure level from the maximum volume. Note that any ConstraintSet that specifies values outside of this range of 0 to 1 can never be satisfied. |
| sampleRate | ConstrainLong | The sample rate in samples per second for the audio data. |
| sampleSize | ConstrainLong | The linear sample size in bits. This constraint can only be satisfied for audio devices that produce linear samples. |
| echoCancellation | ConstrainBoolean | When one or more audio streams are being played while microphones are capturing, it is often desirable to attempt to remove the sound being played from the input signals recorded by the microphones. This is referred to as echo cancellation. There are cases where it is not needed and it is desirable to turn it off so that no audio artifacts are introduced. This allows applications to control this behavior. |
| latency | ConstrainDouble | The latency or latency range, in seconds. The latency is the time between start of processing (for instance, when sound occurs in the real world) to the data being available to the next step in the process. Low latency is critical for some applications; high latency may be acceptable for other applications because it helps with power constraints. The number is expected to be the target latency of the configuration; the actual latency may show some variation from that. |
| channelCount | ConstrainLong | The number of independent channels of sound that the audio data contains, i.e. the number of audio samples per sample frame. |
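The 6 dB figure quoted for a volume of 0.5 follows from the usual amplitude-to-decibel conversion, 20 times the base-10 logarithm of the multiplier. The sketch below works through that arithmetic; the helper names are hypothetical and not part of the API.

```javascript
// Level change in decibels for a linear amplitude multiplier.
function volumeToDecibels(volume) {
  return 20 * Math.log10(volume);
}

// A volume constraint value can only be satisfied inside the range 0 to 1.
function isSatisfiableVolume(volume) {
  return volume >= 0 && volume <= 1;
}

console.log(volumeToDecibels(0.5).toFixed(2)); // -6.02
console.log(volumeToDecibels(1.0)); // 0
console.log(isSatisfiableVolume(1.5)); // false
```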

MediaStreamTrackEvent

The addtrack

and removetrack events use the

MediaStreamTrackEvent interface.

The addtrack and removetrack events notify

the script that the track set of a

MediaStream has been updated by the User Agent.

Firing a track event named

e with a MediaStreamTrack

track means that an event with the name e, which

does not bubble (except where otherwise stated) and is not cancelable

(except where otherwise stated), and which uses the

MediaStreamTrackEvent interface with the

track

attribute set to track, MUST be created and dispatched at the

given target.

- Constructor(DOMString type, MediaStreamTrackEventInit

eventInitDict)

-

Constructs a new MediaStreamTrackEvent.

- [SameObject] readonly attribute MediaStreamTrack track

-

The track attribute

represents the MediaStreamTrack object associated

with the event.

- required MediaStreamTrack track

The model: sources, sinks, constraints, and settings

Browsers provide a media pipeline from sources to sinks. In a browser,

sinks are the <img>, <video>, and <audio> tags.

Traditional sources include streamed content, files, and web resources. The

media produced by these sources typically does not change over time - these

sources can be considered to be static.

The sinks that display these sources to the user (the actual tags

themselves) have a variety of controls for manipulating the source content.

For example, an <img> tag scales down a huge source image of

1600x1200 pixels to fit in a rectangle defined with

width="400" and height="300".

The getUserMedia API adds dynamic sources such as microphones and

cameras - the characteristics of these sources can change in response to

application needs. These sources can be considered to be dynamic in nature.

A <video> element that displays media from a dynamic source can

either perform scaling or it can feed back information along the media

pipeline and have the source produce content more suitable for display.

Note: This sort of feedback loop only enables an "optimization", but

the gain is non-trivial. This optimization can save battery, reduce

network congestion, and so on.

Note that MediaStream sinks (such as

<video>, <audio>, and even

RTCPeerConnection) will continue to have mechanisms to further

transform the source stream beyond that which the Settings,

Capabilities, and Constraints described in this specification

offer. (The sink transformation options, including those of

RTCPeerConnection, are outside the scope of this

specification.)

The act of changing or applying a track constraint may affect the

settings of all tracks sharing that source and

consequently all down-level sinks that are using that source. Many sinks

may be able to take these changes in stride, such as the

<video> element or RTCPeerConnection.

Others like the Recorder API may fail as a result of a source setting

change.

The RTCPeerConnection is an interesting object because it

acts simultaneously as both a sink and a source for

over-the-network streams. As a sink, it has source transformational

capabilities (e.g., lowering bit-rates, scaling-up / down resolutions, and

adjusting frame-rates), and as a source it could have its own settings

changed by a track source (though in this specification sources with the

remote attribute set to true do not consider the

current constraints applied to a track).

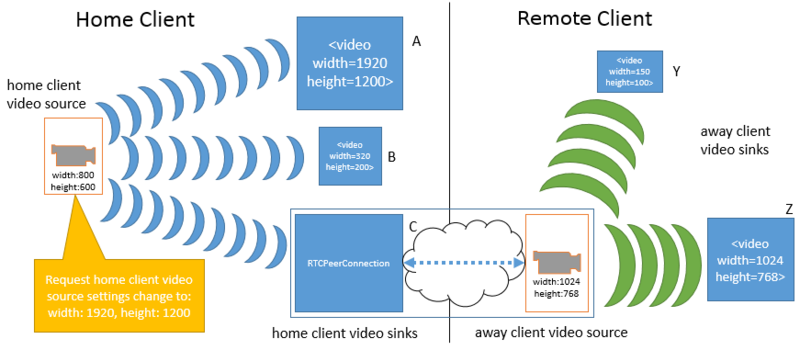

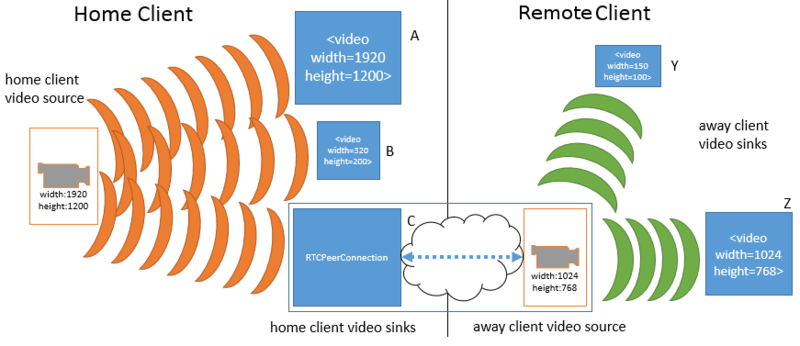

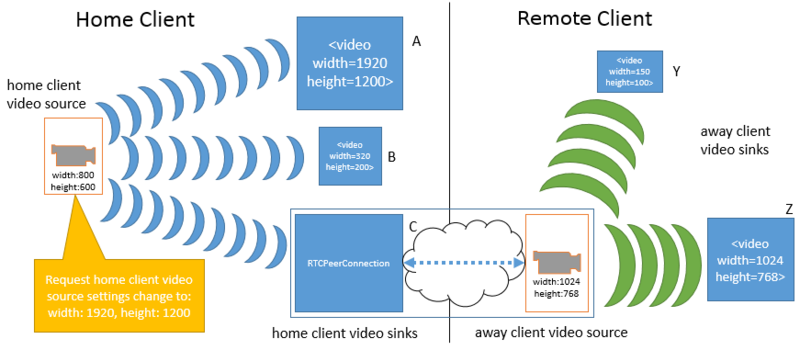

To illustrate how changes to a given source impact various sinks,

consider the following example. This example only uses width and height,

but the same principles apply to all of the Settings exposed in this

specification. In the first figure a home client has obtained a video

source from its local video camera. The source's width and height settings

are 800 pixels and 600 pixels, respectively. Three

MediaStream objects on the home client contain tracks

that use this same deviceId. The three media streams

are connected to three different sinks: a <video>

element (A), another <video> element (B), and a peer

connection (C). The peer connection is streaming the source video to a

remote client. On the remote client there are two media streams with tracks

that use the peer connection as a source. These two media streams are

connected to two <video> element sinks (Y and

Z).

Note that at this moment, all of the sinks on the home client must apply

a transformation to the original source's provided dimension settings. B is

scaling the video down, A is scaling the video up (resulting in loss of

quality), and C is also scaling the video up slightly for sending over the

network. On the remote client, sink Y is scaling the video way

down, while sink Z is not applying any scaling.

Next, one of the tracks calls applyConstraints() to request

a higher resolution (1920 by 1200 pixels) from the home

client's video source.

Note that the source change immediately affects all of the tracks and

sinks on the home client, but does not impact any of the sinks (or sources)

on the remote client. With the increase in the home client source video's

dimensions, sink A no longer has to perform any scaling, while sink B must

scale down even further than before. Sink C (the peer connection) must now

scale down the video in order to keep the transmission constant to the

remote client.
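The resolution request in this example can be sketched in script as follows. This is a minimal illustration, assuming `track` is a video MediaStreamTrack backed by the home client's camera; `requestResolution` is a hypothetical helper name, not something defined by this specification. Because the source may settle on values other than those requested, the sketch reads the settings back instead of assuming them.

```javascript
// Hypothetical helper: ask a video track for a new resolution, then report
// the settings its source actually ended up with.
async function requestResolution(track, width, height) {
  // applyConstraints() returns a promise that settles once the User Agent
  // has applied (or refused) the new constraints.
  await track.applyConstraints({ width: width, height: height });
  const settings = track.getSettings();
  return { width: settings.width, height: settings.height };
}
```

A caller might write `requestResolution(track, 1920, 1200)` and compare the result with the sizes of its sinks to see which of them will now scale.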

While not shown, an equally valid settings change request could be made

on the remote client's side. In addition to impacting sink Y and Z in the

same manner as A, B and C were impacted earlier, it could lead to

re-negotiation with the peer connection on the home client in order to

alter the transformation that it is applying to the home client's video

source. Such a change is NOT REQUIRED to change anything related to sink A

or B or the home client's video source.

Note that this specification does not define a mechanism by which a

change to the remote client's video source could automatically trigger a

change to the home client's video source. Implementations may choose to

make such source-to-sink optimizations as long as they only do so within

the constraints established by the application, as the next example

demonstrates.

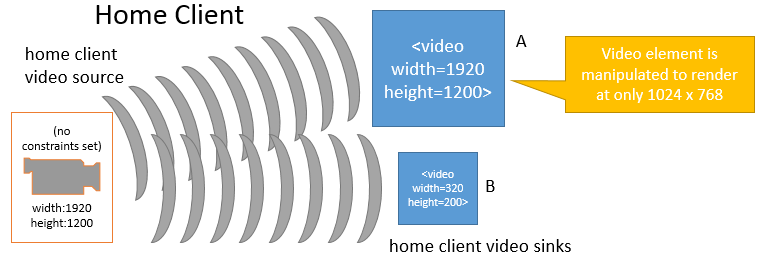

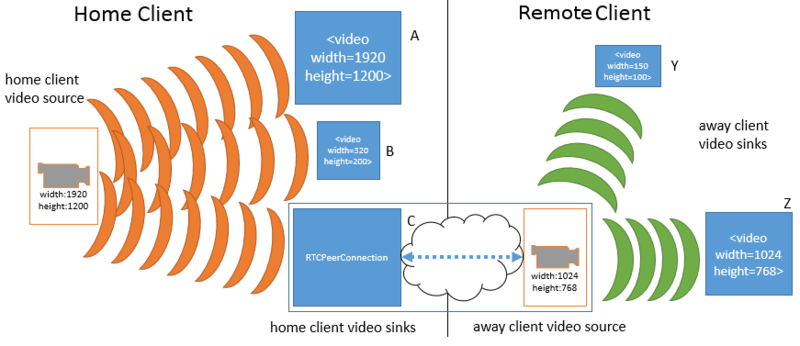

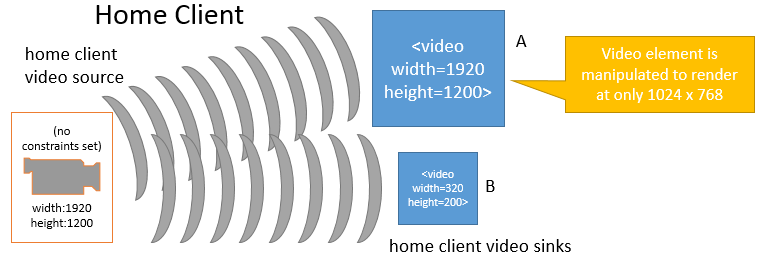

It is fairly obvious that changes to a given source will impact sink

consumers. However, in some situations changes to a given sink may also

cause implementations to adjust a source's settings. This is illustrated in

the following figures. In the first figure below, the home client's video

source is sending a video stream sized at 1920 by 1200 pixels. The video

source is also unconstrained, such that the exact source dimensions are

flexible as far as the application is concerned. Two

MediaStream objects contain tracks with the same

deviceId, and those MediaStream s

are connected to two different <video> element sinks A

and B. Sink A has been sized to width="1920" and

height="1200" and is displaying the source's video content

without any transformations. Sink B has been sized smaller and, as a

result, is scaling the video down to fit its rectangle of 320 pixels across

by 200 pixels down.

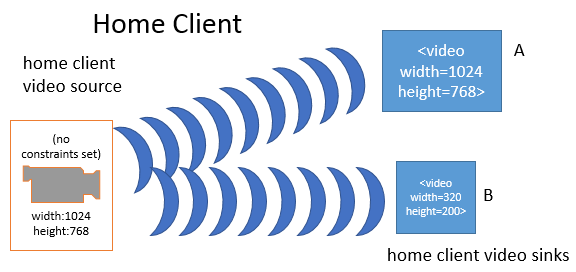

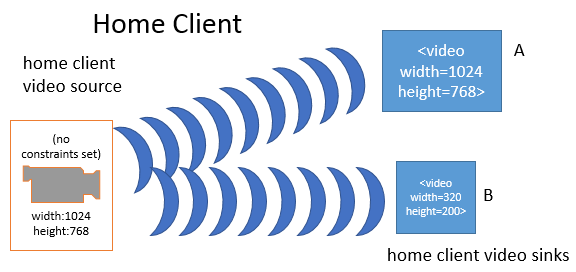

When the application changes sink A to a smaller dimension (from 1920 to

1024 pixels wide and from 1200 to 768 pixels tall), the browser's media

pipeline may recognize that none of its sinks require the higher source

resolution, and needless work is being done both on the part of the source

and sink A. In such a case and without any other constraints forcing the

source to continue producing the higher resolution video, the media

pipeline MAY change the source resolution:

In the above figure, the home client's video source resolution was

changed to the greater of that from sink A and B in order to optimize

playback. While not shown above, the same behavior could apply to peer

connections and other sinks.
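The choice of "the greater of sink A and B" can be modeled with a few lines of script. This is only a sketch of one possible policy, not a required algorithm: the function name and the `{ width, height }` shape used to describe a sink are assumptions made for illustration.

```javascript
// Hypothetical model: each sink is described by the size it displays at.
// Returns the smallest source resolution that no sink needs to scale up.
// With an empty list the result is 0 by 0.
function smallestSufficientResolution(sinks) {
  let width = 0;
  let height = 0;
  for (const sink of sinks) {
    width = Math.max(width, sink.width);
    height = Math.max(height, sink.height);
  }
  return { width: width, height: height };
}

// Sink A resized to 1024 by 768, sink B unchanged at 320 by 200.
const chosen = smallestSufficientResolution([
  { width: 1024, height: 768 },
  { width: 320, height: 200 },
]);
console.log(chosen.width + "x" + chosen.height); // prints "1024x768"
```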

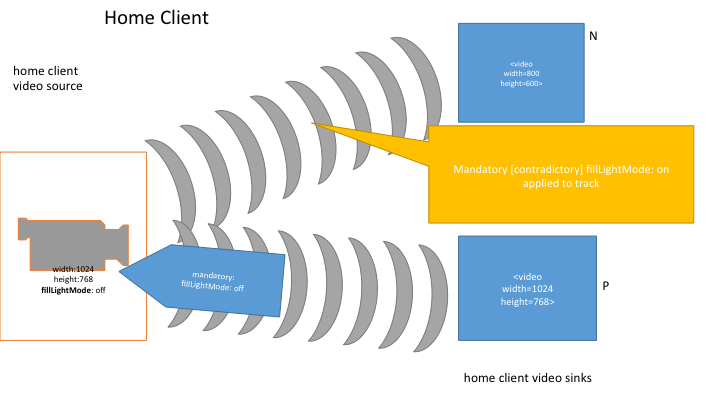

It is possible that constraints can be applied to a track which a

source is unable to satisfy, either because the source itself cannot

satisfy the constraint or because the source is already satisfying a

conflicting constraint. When this happens, the promise returned from

applyConstraints() will be rejected, without applying

any of the new constraints. Since no change in constraints occurs in this

case, there is also no required change to the source itself as a result of

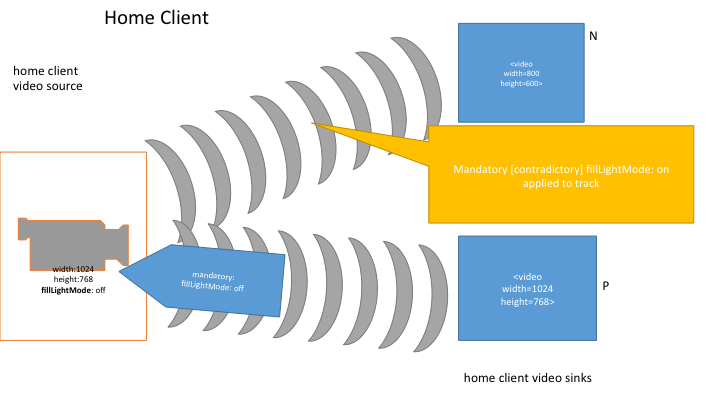

this condition. Here is an example of this behavior.

In this example, two media streams each have a video track that share

the same source. The first track initially has no constraints applied. It

is connected to sink N. Sink N has a resolution of 800 by 600 pixels and is

scaling down the source's resolution of 1024 by 768 to fit. The other track

has a mandatory constraint forcing off the source's fill light; it is

connected to sink P. Sink P has a width and height equal to that of the

source.

Now, the first track adds a mandatory constraint that the fill light

should be forced on. At this point, both mandatory constraints cannot be

satisfied by the source (the fill light cannot be on and off

at the same time). Since this state was caused by the first track's attempt

to apply a conflicting constraint, the constraint application fails and

there is no change in the source's settings nor to the constraints on

either track.
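From the application's point of view, the failed constraint application shows up as a rejected promise. The sketch below assumes the rejection reason is an OverconstrainedError whose `constraint` attribute names a constraint that could not be satisfied; `tryApplyConstraints` is a hypothetical helper, and the constraints passed to it are supplied by the caller.

```javascript
// Hypothetical helper: try to apply constraints and report the outcome
// instead of letting the rejection propagate.
async function tryApplyConstraints(track, constraints) {
  try {
    await track.applyConstraints(constraints);
    return { applied: true, failedConstraint: null };
  } catch (error) {
    if (error && error.name === "OverconstrainedError") {
      // The constraint attribute names one of the constraints that failed.
      return { applied: false, failedConstraint: error.constraint };
    }
    throw error; // some other failure; not handled in this sketch
  }
}
```

When `applied` is false, the track keeps the constraints it had before the call, so the application can fall back to a less demanding request.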

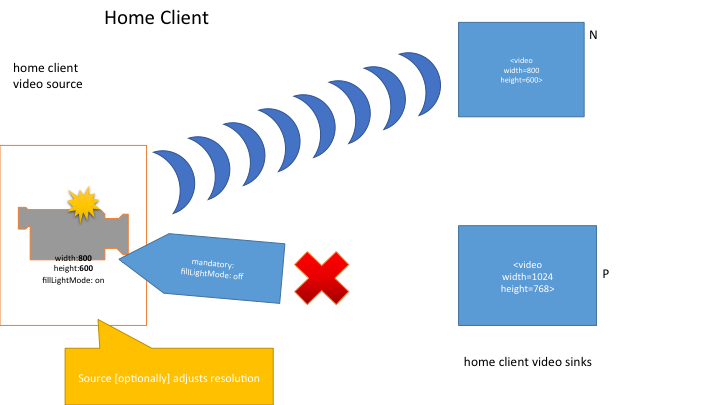

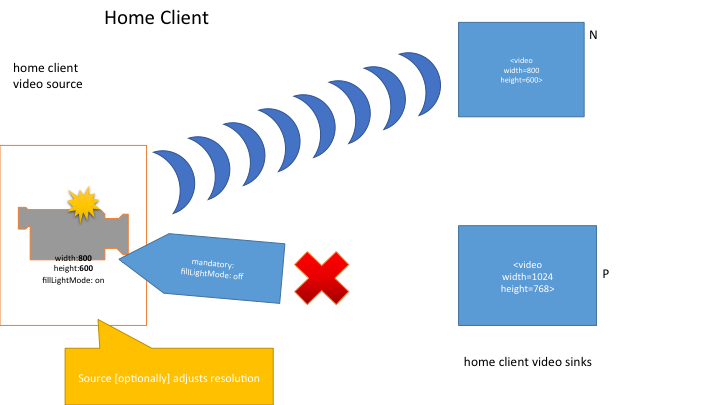

Let's look at a slightly different situation starting from the same

point. In this case, instead of the first track attempting to apply a

conflicting constraint, the user physically locks the camera into a mode

where the fill light is on. At this point the source can no longer satisfy

the second track's mandatory constraint that the fill light be off. The

second track is transitioned into the muted state and receives an

overconstrained event. At the same time, the source notes that its

remaining active sink only requires a resolution of 800 by 600 and so it

adjusts its resolution down to match (this is an optional optimization that

the User Agent is allowed to make given the situation).

At this point, it is the responsibility of the application to address

the problem that led to the overconstrained situation, perhaps by removing

the fill light mandatory constraint on the second track or by closing the

second track altogether and informing the user.
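One way an application might react is sketched below, assuming the track dispatches an event named overconstrained whose `error` attribute, when present, carries the OverconstrainedError. The helper name and the `notifyUser` callback are hypothetical. The sketch takes the second option described above: it stops the track and informs the user.

```javascript
// Hypothetical helper: when a track becomes overconstrained, stop it and
// tell the application which constraint (if known) caused the problem.
function stopOnOverconstrained(track, notifyUser) {
  track.addEventListener("overconstrained", (event) => {
    const constraint = event.error ? event.error.constraint : undefined;
    track.stop();
    notifyUser(constraint);
  });
}
```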

MediaStreams in Media Elements

This specification now references [[!HTML51]] for MediaStream

playback in an HTMLMediaElement. This section, which defines

MediaStream-specific exceptions and additions, is still a work in progress,

and feedback is most welcome.

A MediaStream may be assigned to media elements. A

MediaStream is not preloadable or seekable and represents a

simple, potentially infinite, linear media timeline. The timeline starts at

0 and increments linearly in real time as long as the

MediaStream is playing. The timeline does not increment when

the playout of the MediaStream is paused.

User Agents that support this specification MUST support the

srcObject attribute of the HTMLMediaElement

interface defined in [[!HTML51]], which includes support for playing

MediaStream objects.
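A minimal sketch of playing a MediaStream through `srcObject` follows. The helper name `attachStream` is hypothetical, and `mediaElement` is assumed to be a `<video>` or `<audio>` element obtained by the application.

```javascript
// Hypothetical helper: show a MediaStream in a media element.
function attachStream(mediaElement, stream) {
  // srcObject takes the MediaStream directly; no URL is involved.
  mediaElement.srcObject = stream;
  // Start playout; some implementations return a promise from play().
  return mediaElement.play();
}
```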

The [[!HTML51]] document outlines how the HTMLMediaElement

works with a media provider object. The following applies when the

media provider object is a MediaStream:

-

Whenever an [[!HTML51]]

AudioTrack or a

VideoTrack is created, the id and

label attributes must be initialized to the corresponding

attributes of the MediaStreamTrack, the kind

attribute must be initialized to "main" and the language

attribute to the empty string

- The User Agent MUST always play the current data from the

MediaStream and MUST NOT buffer.

-

Since the order in the MediaStream's track set is undefined, no requirements are put on how

the AudioTrackList and VideoTrackList are ordered

-

When the MediaStream state moves from the active

to the inactive state, the User Agent

MUST raise an

ended event on the HTMLMediaElement and set its

ended attribute to true. Note that once

ended equals true the HTMLMediaElement

will not play media even if new MediaStreamTrack objects

are added to the MediaStream (causing it to return

to the active state) unless autoplay is true

or the web application restarts the element, e.g., by calling

play()

-

Any calls to the

fastSeek method on an HTMLMediaElement must be

ignored

The nature of the MediaStream places certain

restrictions on the behavior and attribute values of the associated

HTMLMediaElement and on the operations that can be performed

on it, as shown below:

| Attribute Name | Attribute Type | Valid Values When Using a MediaStream | Additional considerations |
| --- | --- | --- | --- |
| preload | DOMString | On getting: none. On setting: ignored. | A MediaStream cannot be preloaded. |
| buffered | TimeRanges | buffered.length MUST return 0. | A MediaStream cannot be preloaded. Therefore, the amount buffered is always an empty TimeRanges object. |
| currentTime | double | Any non-negative integer. The initial value is 0 and the value increments linearly in real time whenever the stream is playing. | The value is the current stream position, in seconds. On any attempt to set this attribute, the User Agent must throw an InvalidStateError exception. |
| seeking | boolean | false | A MediaStream is not seekable. Therefore, this attribute MUST always have the value false. |
| defaultPlaybackRate | double | On setting: ignored. On getting: return 1.0 | A MediaStream is not seekable. Therefore, this attribute MUST always have the value 1.0 and any attempt to alter it MUST be ignored. Note that this also means that the ratechange event will not fire. |
| playbackRate | double | 1.0 | A MediaStream is not seekable. Therefore, this attribute MUST always have the value 1.0 and any attempt to alter it MUST be ignored. Note that this also means that the ratechange event will not fire. |
| played | TimeRanges | played.length MUST return 1. played.start(0) MUST return 0. played.end(0) MUST return the last known currentTime. | A MediaStream's timeline always consists of a single range, starting at 0 and extending up to the currentTime. |
| seekable | TimeRanges | seekable.length MUST return 0. | A MediaStream is not seekable. |
| loop | boolean | true, false | Setting the loop attribute has no effect since a MediaStream has no defined end and therefore cannot be looped. |

When applicable, the behavior outlined above for

HTMLMediaElement carries over to

MediaController objects.
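The restrictions in the table can be turned into a simple self-check, for instance in a test page. The function below is a sketch only: its name is hypothetical, it covers a subset of the attributes, and it assumes `element` is a media element whose `srcObject` is a MediaStream.

```javascript
// Hypothetical checker: returns the names of attributes whose values differ
// from what the table expects for an element playing a MediaStream.
function findTimelineViolations(element) {
  const violations = [];
  if (element.buffered.length !== 0) violations.push("buffered");
  if (element.seekable.length !== 0) violations.push("seekable");
  if (element.seeking !== false) violations.push("seeking");
  if (element.playbackRate !== 1.0) violations.push("playbackRate");
  if (element.defaultPlaybackRate !== 1.0) violations.push("defaultPlaybackRate");
  const played = element.played;
  if (played.length !== 1 || played.start(0) !== 0) violations.push("played");
  return violations;
}
```

An empty array means the element matched every expectation that the sketch checks.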

Error Handling

This section and its subsections extend the list of Error subclasses

defined in [[!ES6]] following the pattern for NativeError in section 19.5.6

of that specification. Assume the following:

- that use of syntax such as [[\something]] and %something% is as used

in [[!ES6]].

- that the rules for ECMAScript standard built-in objects ([[!ES6]],

section 17) are in effect in this section.

- that the new intrinsic objects %OverconstrainedError% and

%OverconstrainedErrorPrototype% are available as if they had been

included in ([[!ES6]], Table 7) and all referencing sections, e.g.

([[!ES6]], section 8.2.2), thus behave appropriately.

ECMAScript 6 Terminology

The following terms used in this section are defined in [[!ES6]].

| Term/Notation | Section in [[!ES6]] |
| --- | --- |
| Type(X) | 6 |
| intrinsic object | 6.1.7.4 |
| [[\ErrorData]] | 19.5.1 |
| internal slot | 6.1.7.2 |
| NewTarget | various uses, but no definition |
| active function object | 8.3 |
| OrdinaryCreateFromConstructor() | 9.1.14 |
| ReturnIfAbrupt() | 6.2.2.4 |
| Assert | 5.2 |
| String | 4.3.17-19, depending on context |
| PropertyDescriptor | 6.2.4 |
| [[\Value]] | 6.1.7.1 |
| [[\Writable]] | 6.1.7.1 |
| [[\Enumerable]] | 6.1.7.1 |
| [[\Configurable]] | 6.1.7.1 |
| DefinePropertyOrThrow() | 7.3.7 |
| abrupt completion | 6.2.2 |
| ToString() | 7.1.12 |
| [[\Prototype]] | 9.1 |
| %Error% | 19.5.1 |
| Error | 19.5 |
| %ErrorPrototype% | 19.5.3 |
| Object.prototype.toString | 19.1.3.6 |

OverconstrainedError Object

OverconstrainedError Constructor

The OverconstrainedError Constructor is the %OverconstrainedError%

intrinsic object. When OverconstrainedError is called as a

function rather than as a constructor, it creates and initializes a new

OverconstrainedError object. A call of the object as a function is

equivalent to calling it as a constructor with the same arguments. Thus

the function call OverconstrainedError(...)

is equivalent to the object creation expression new

OverconstrainedError(...) with the same

arguments.

The OverconstrainedError constructor is designed to be

subclassable. It may be used as the value of an extends

clause of a class definition. Subclass constructors that intend to

inherit the specified OverconstrainedError behaviour must

include a super call to the

OverconstrainedError constructor to create and initialize

the subclass instance with an [[\ErrorData]] internal slot.

OverconstrainedError ( constraint, message )

When the OverconstrainedError function is

called with arguments constraint and message the

following steps are taken:

- If NewTarget is undefined, let newTarget be the active function object, else let newTarget be NewTarget.

- Let O be OrdinaryCreateFromConstructor(newTarget, "%OverconstrainedErrorPrototype%", «[[\ErrorData]]»).

- ReturnIfAbrupt(O).

- If constraint is not undefined, then

  - Let constraintDesc be the PropertyDescriptor{[[\Value]]: constraint, [[\Writable]]: false, [[\Enumerable]]: false, [[\Configurable]]: false}.

  - Let cStatus be DefinePropertyOrThrow(O, "constraint", constraintDesc).

  - Assert: cStatus is not an abrupt completion.

- If message is not undefined, then

  - Let msg be ToString(message).

  - Let msgDesc be the PropertyDescriptor{[[\Value]]: msg, [[\Writable]]: true, [[\Enumerable]]: false, [[\Configurable]]: true}.

  - Let mStatus be DefinePropertyOrThrow(O, "message", msgDesc).

  - Assert: mStatus is not an abrupt completion.

- Return O.

Properties of the OverconstrainedError Constructor

The value of the [[\Prototype]] internal slot of the

OverconstrainedError constructor is the intrinsic object %Error%.

Besides the length property (whose value is 1),

the OverconstrainedError constructor has the following properties:

OverconstrainedError.prototype

The initial value of OverconstrainedError.prototype

is the OverconstrainedError

prototype object. This property has the attributes {

[[\Writable]]: false, [[\Enumerable]]: false,

[[\Configurable]]: false }.

Properties of the OverconstrainedError Prototype Object

The OverconstrainedError prototype object is an ordinary object. It

is not an Error instance and does not have an [[\ErrorData]] internal

slot.

The value of the [[\Prototype]] internal slot of the

OverconstrainedError prototype object is the intrinsic object

%ErrorPrototype%.

OverconstrainedError.prototype.constructor

The initial value of the constructor property of the prototype for

the OverconstrainedError constructor is the intrinsic object %OverconstrainedError%.

OverconstrainedError.prototype.constraint

The initial value of the constraint property of the prototype for

the OverconstrainedError constructor is the empty String.

OverconstrainedError.prototype.message

The initial value of the message property of the prototype for the

OverconstrainedError constructor is the empty String.

OverconstrainedError.prototype.name

The initial value of the name property of the prototype for the

OverconstrainedError constructor is

"OverconstrainedError".

Properties of OverconstrainedError Instances

OverconstrainedError instances are ordinary objects that inherit

properties from the OverconstrainedError prototype object and have an

[[\ErrorData]] internal slot whose value is undefined. The only

specified use of [[\ErrorData]] is by Object.prototype.toString

([[!ES6]], section 19.1.3.6) to identify instances of Error or its

various subclasses.
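The object model described in this section can be approximated with an ordinary ES6 class. The following is a sketch under stated assumptions, not the normative definition: the class is named `OverconstrainedErrorSketch` to avoid clashing with a User Agent's own object, and it does not reproduce every detail (for instance, a class cannot be called without `new`, and its `length` differs).

```javascript
class OverconstrainedErrorSketch extends Error {
  constructor(constraint, message) {
    super(); // creates the object with its [[ErrorData]] internal slot
    if (constraint !== undefined) {
      Object.defineProperty(this, "constraint", {
        value: constraint,
        writable: false,
        enumerable: false,
        configurable: false,
      });
    }
    if (message !== undefined) {
      Object.defineProperty(this, "message", {
        value: String(message), // approximates ToString(message)
        writable: true,
        enumerable: false,
        configurable: true,
      });
    }
  }
}

// Prototype defaults: an empty constraint and the specified name.
// (message already defaults to "" through Error.prototype.)
for (const [key, value] of [["constraint", ""], ["name", "OverconstrainedError"]]) {
  Object.defineProperty(OverconstrainedErrorSketch.prototype, key, {
    value: value,
    writable: true,
    enumerable: false,
    configurable: true,
  });
}
```

Because the class extends `Error`, the constructor's prototype is `Error` and the prototype object's prototype is `Error.prototype`, matching the two [[\Prototype]] statements above.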

The following will need to be updated when we finish out the error

definitions.

The following interface is defined for cases when an

OverconstrainedError is raised as an event:

- Constructor(DOMString type, OverconstrainedErrorEventInit

eventInitDict)

-

Constructs a new

OverconstrainedErrorEvent.

- readonly attribute OverconstrainedError? error

-

The OverconstrainedError describing the error

that triggered the event (if any).

- OverconstrainedError? error = null

-

The OverconstrainedError describing the error

associated with the event (if any)

ErrorEvent

The following interface is defined for cases when an event is raised

that could have been caused by an error:

Firing an error event named

e with an Error error means that

an event with the name e, which does not bubble (except where

otherwise stated) and is not cancelable (except where otherwise stated),

and which uses the ErrorEvent interface with the

error attribute set to

error, MUST be created and dispatched at the given target. If

no Error object is specified, the error attribute defaults to null.

- Constructor(DOMString type, ErrorEventInit eventInitDict)

-

Constructs a new ErrorEvent.

- readonly attribute Error? error

-

If the event was raised because of an error, this attribute may be

set to that error object.

- Error? error = null

-

If the event was raised because of an error, this attribute may be

set to that error object.

Error names

There is a known issue with this table of error names,

because MediaStreamError has been removed but is still

needed in the legacy, callback-based getUserMedia interface. This issue

will be fixed through resolution of PR #216.

The table below lists the error names defined in this

specification.

Error names

| Name | Description | Note |
| --- | --- | --- |
| OverconstrainedError | One of the mandatory Constraints could not be satisfied. | The constraint attribute is set to the name of any one of the constraints that caused the error, if any, as described in the SelectSettings algorithm |

Enumerating Local Media Devices

This section describes an API that the script can use to query the User

Agent about connected media input and output devices (for example a web

camera or a headset).

NavigatorUserMedia

- [SameObject] readonly attribute MediaDevices mediaDevices

-

Returns the MediaDevices object associated with this

Navigator object.

MediaDevices

The MediaDevices object is the entry point to the API

used to examine and get access to media devices available to the User

Agent.

When a new media input or output device is made available, the User

Agent MUST queue a task that fires a simple event named devicechange at the

MediaDevices object.

- attribute EventHandler ondevicechange

-

The event type of this event handler is devicechange.

- Promise<sequence<MediaDeviceInfo>> enumerateDevices

()

-

Collects information about the User Agent's available media input

and output devices.

This method returns a promise. The promise will be fulfilled with

a sequence of MediaDeviceInfo dictionaries

representing the User Agent's available media input and output

devices if enumeration is successful.

Elements of this sequence that represent input devices will be of

type InputDeviceInfo which extends

MediaDeviceInfo.

Camera and microphone sources should be

enumerable. Specifications that add additional types of source will

provide recommendations about whether the source type should be

enumerable.

When the enumerateDevices()

method is called, the User Agent must run the following steps:

-

Let p be a new promise.

-

Run the following steps in parallel:

-

Let resultList be an empty list.

-

If this method has been called previously within this

browsing session, let oldList be the list of

MediaDeviceInfo objects that was produced

at that call (resultList); otherwise, let

oldList be an empty list.

-

Probe the User Agent for available media devices, and run

the following sub steps for each discovered device,

device:

-

If device is represented by a

MediaDeviceInfo object in

oldList, append that object to

resultList, abort these steps and continue

with the next device (if any).

-

Let deviceInfo be a new

MediaDeviceInfo object to represent

device.

-

If device belongs to the same physical

device as a device already represented in

oldList or resultList, initialize

deviceInfo's groupId member

to the groupId value

of the existing MediaDeviceInfo

object. Otherwise, let deviceInfo's

groupId member

be a newly generated unique identifier.

-

Append deviceInfo to

resultList.

-

If none of the local devices are attached to an active

MediaStreamTrack in the current browsing

context, and if no persistent

permission to access these local devices has been granted

to the page's origin, let filteredList be a copy

of resultList, and all its elements, where the

label

member is the empty string.

-

If filteredList is a non-empty list, then

resolve p with filteredList. Otherwise,

resolve p with resultList.

-

Return p.
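The steps above can be modeled synchronously to make the reuse of old objects, the assignment of groupId, and the label filtering easier to follow. Everything in this sketch is hypothetical: the function and field names (`hardwareKey`, `physicalUnit`), the identifier format, and the `accessGranted` flag, which stands in for the "attached to an active track or persistent permission granted" condition. A real User Agent would never expose the internal keys.

```javascript
let idCounter = 0;
function freshId(prefix) {
  idCounter += 1;
  return prefix + "-" + idCounter;
}

// discovered: probed devices, each { hardwareKey, physicalUnit, kind, label }
// oldList: the resultList kept from the previous call (or [])
// accessGranted: stands in for the permission condition in the steps above
function enumerateDevicesModel(discovered, oldList, accessGranted) {
  const resultList = [];
  for (const device of discovered) {
    const known = oldList.find((info) => info.hardwareKey === device.hardwareKey);
    if (known) {
      resultList.push(known); // reuse the object produced by the earlier call
      continue;
    }
    // Share a groupId with any already-known part of the same physical device.
    const sibling = oldList.concat(resultList)
      .find((info) => info.physicalUnit === device.physicalUnit);
    resultList.push({
      hardwareKey: device.hardwareKey,
      physicalUnit: device.physicalUnit,
      deviceId: freshId("device"),
      kind: device.kind,
      label: device.label,
      groupId: sibling ? sibling.groupId : freshId("group"),
    });
  }
  // What the page sees: labels are blanked when no access has been granted.
  const exposed = resultList.map((info) => ({
    deviceId: info.deviceId,
    kind: info.kind,
    label: accessGranted ? info.label : "",
    groupId: info.groupId,
  }));
  return { resultList: resultList, exposed: exposed };
}
```

Passing the returned `resultList` as `oldList` on the next call mirrors the way identifiers stay the same across calls within a browsing session.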

Since this method returns persistent

information across browsing sessions and origins via the number and

grouping of media capture devices, it adds to the fingerprinting

surface exposed by the user agent.

Once authorization has been granted to one of

the capture devices, it provides additional persistent cross-origin

information via the human readable labels associated with available

capture devices, which further adds to the fingerprinting

surface.

Access control model

The algorithm described above means that the access to media device

information depends on whether or not permission has been granted to

the page's origin to use media devices.

If no such access has been granted, the

MediaDeviceInfo dictionary will contain the

deviceId, kind, and groupId.

If access has been granted for a media device, the

MediaDeviceInfo dictionary will contain the

deviceId, kind, label, and groupId.

Device Info

- readonly attribute DOMString deviceId

-

A unique identifier for the represented device.

All enumerable devices have an identifier that MUST be unique to

the page's origin. As long as no local device has been attached to an

active MediaStreamTrack in a page from this origin, and no persistent permission to access local

devices has been granted to this origin, then this identifier MUST

NOT be reusable after the end of the current browsing session.

However, if any local devices have been attached to an active

MediaStreamTrack in a page from this origin, or persistent permission to access local

devices has been granted to this origin, then this identifier MUST be

persisted across browsing sessions.

Unique and stable identifiers let the application save, identify

the availability of, and directly request specific sources.

This identifier MUST be un-guessable by applications of other

origins to prevent the identifier being used to correlate the same

user across different origins.

Since deviceId may persist across

browsing sessions and to reduce its potential as a fingerprinting

mechanism, deviceId is to be treated as other persistent

storage mechanisms such as cookies [[COOKIES]]. User agents MUST

reset per-origin device identifiers when other persistent storages

are cleared.

- readonly attribute MediaDeviceKind kind

-

Describes the kind of the represented device.

- readonly attribute DOMString label

-

A label describing this device (for example "External USB

Webcam"). If the device has no associated label, then this attribute

MUST return the empty string.

- readonly attribute DOMString groupId

-

Returns the group identifier of the represented device. Two

devices have the same group identifier if they belong to the same

physical device; for example a monitor with a built-in camera and

microphone.

Since groupId may persist across

browsing sessions and to reduce its potential as a fingerprinting

mechanism, groupId is to be treated as other persistent

storage mechanisms such as cookies [[COOKIES]]. User agents MUST

reset per-origin device identifiers when other persistent storages

are cleared.

- serializer = { attribute }

- audioinput

-

Represents an audio input device; for example a microphone.

- audiooutput

-

Represents an audio output device; for example a pair of

headphones.

- videoinput

-

Represents a video input device; for example a webcam.
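The attributes above are typically consumed together: groupId to pair up the parts of one physical device, and deviceId to find a device the application remembered earlier. The helpers below are a sketch with hypothetical names; `devices` is assumed to be the array that an enumerateDevices() promise was fulfilled with.

```javascript
// Group MediaDeviceInfo-like objects by groupId (same physical device).
function groupByPhysicalDevice(devices) {
  const groups = new Map();
  for (const device of devices) {
    if (!groups.has(device.groupId)) groups.set(device.groupId, []);
    groups.get(device.groupId).push(device);
  }
  return groups;
}

// Find a previously saved device of the given kind, or null if it is gone.
function findSavedDevice(devices, savedDeviceId, kind) {
  const match = devices.find(
    (d) => d.deviceId === savedDeviceId && d.kind === kind
  );
  return match || null;
}
```

Since identifiers are reset when other persistent storage is cleared, callers should expect `findSavedDevice` to return null and fall back to an unconstrained request.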

Input-specific Device Info

- MediaTrackCapabilities getCapabilities()

-

Returns a MediaTrackCapabilities object

describing the primary audio or video track of a device's

MediaStream (according to its kind

value), in the absence of any user-supplied constraints. These

capabilities MUST be identical to those that would have been obtained

by calling getCapabilities() on the first

MediaStreamTrack of this type in a

MediaStream returned by

getUserMedia({deviceId: id}) where id is

the value of the deviceId attribute of this

MediaDeviceInfo.

If no access has been granted to any local devices and this

InputDeviceInfo has been filtered with respect to unique

identifying information (see above description of

enumerateDevices() result), then this method returns

null.
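Because getCapabilities() may return null for filtered entries, callers need to guard before reading a capability. The sketch below assumes the `width` capability, when present, is a range with a `max` member; the helper name is hypothetical.

```javascript
// Hypothetical helper: largest width an input device reports, or null when
// capabilities are withheld or no width range is reported.
function maxWidthOf(inputDeviceInfo) {
  const capabilities = inputDeviceInfo.getCapabilities();
  if (!capabilities || !capabilities.width) return null;
  return capabilities.width.max;
}
```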

Obtaining local multimedia content

This section extends NavigatorUserMedia and

MediaDevices with APIs to request permission to access

media input devices available to the User Agent.

When on an insecure origin [[mixed-content]], User Agents are encouraged

to warn about usage of navigator.mediaDevices.getUserMedia,

navigator.getUserMedia, and any prefixed variants in their

developer tools, error logs, etc. It is explicitly permitted for User

Agents to remove these APIs entirely when on an insecure origin, as long as

they remove all of them at once (e.g., they should not leave just the

prefixed version available on insecure origins).

NavigatorUserMedia Interface Extensions

The definition of getUserMedia() in this section reflects two major

changes from the method definition that has existed here for many

months.

First, the official definition for the getUserMedia() method, and

the one which developers are encouraged to use, is now at MediaDevices. The

working group reached consensus on this change on the condition that the

original API remain available here under the Navigator object for

backwards compatibility. The working group acknowledges that early users

of these APIs have been encouraged to define getUserMedia as "var

getUserMedia = navigator.getUserMedia || navigator.webkitGetUserMedia ||

navigator.mozGetUserMedia;" so that their code works both before and

after official implementations of getUserMedia() ship in popular

browsers. To ensure functional equivalence, the getUserMedia()

method here is defined in terms of the method under MediaDevices.

Second, the decision to change all other callback-based methods in

the specification to be based on Promises instead required that the

navigator.getUserMedia() definition reflect this in its use of

navigator.mediaDevices.getUserMedia(). Because navigator.getUserMedia()

is now the only callback-based method remaining in the specification,

there is ongoing discussion as to a) whether it still belongs in the

specification, and b) if it does, whether its syntax should remain

callback-based or change in some way to use Promises. Input on these

questions is encouraged, particularly from developers actively using

today's implementations of this functionality.

Note that the other methods that changed from a callback-based

syntax to a Promises-based syntax were not considered to have been

implemented widely enough in any form to have to consider legacy

usage.

- void getUserMedia(MediaStreamConstraints constraints,

NavigatorUserMediaSuccessCallback successCallback,

NavigatorUserMediaErrorCallback errorCallback)

-

Prompts the user for permission to use their webcam or other

video or audio input.

The constraints argument is a dictionary of type

MediaStreamConstraints.

The successCallback will be invoked with a suitable

MediaStream object as its argument if the user

accepts valid tracks as described in getUserMedia() on

MediaDevices.

The errorCallback will be invoked if there is a failure

in finding valid tracks or if the user denies permission, as

described in getUserMedia() on

MediaDevices.

When the getUserMedia() method is

called, the User Agent MUST run the following steps:

-

Let constraints be the method's first argument.

-

Let successCallback be the callback indicated by

the method's second argument.

-

Let errorCallback be the callback indicated by the

method's third argument.

-

Run the steps specified by the getUserMedia() algorithm

with constraints as the argument, and let p

be the resulting promise.

-

Upon fulfillment of p with value stream,

run the following step:

-

Invoke successCallback with stream

as the argument.

-

Upon rejection of p with reason r, run

the following step:

-

Invoke errorCallback with r as the

argument.
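The steps above amount to a thin wrapper around the promise-based method. A minimal sketch, assuming `mediaDevices` is the object exposed as navigator.mediaDevices; the function name is hypothetical and the object is passed in as a parameter only to keep the example self-contained:

```javascript
// Callback-style wrapper expressed in terms of the promise-based method.
function legacyGetUserMedia(mediaDevices, constraints, successCallback, errorCallback) {
  const p = mediaDevices.getUserMedia(constraints);
  p.then(
    (stream) => { successCallback(stream); }, // upon fulfillment of p
    (reason) => { errorCallback(reason); }    // upon rejection of p
  );
}
```

Passing both handlers to a single `then()` call keeps the two outcomes exclusive: an exception thrown inside `successCallback` does not invoke `errorCallback`.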

MediaDevices Interface Extensions

The definition of getUserMedia() in this section reflects two major

changes from the method definition that has existed under

NavigatorUserMedia for many months.

First, the official definition for the getUserMedia() method, and

the one which developers are encouraged to use, is now the one defined

here under MediaDevices. The working group reached consensus on this

change on the condition that the original API remain available at

NavigatorUserMedia.getUserMedia() under the Navigator object for

backwards compatibility. The working group acknowledges that early users

of these APIs have been encouraged to define getUserMedia as "var

getUserMedia = navigator.getUserMedia || navigator.webkitGetUserMedia ||

navigator.mozGetUserMedia;" so that their code works both before and

after official implementations of getUserMedia() ship in popular

browsers. To ensure functional equivalence, the getUserMedia()

method under NavigatorUserMedia is defined in terms of the method

here.

Second, the method defined here is Promises-based, while the one

defined under NavigatorUserMedia is currently still callback-based.

Developers expecting to find getUserMedia() defined under

NavigatorUserMedia are strongly encouraged to read the detailed Note

given there.

The getSupportedConstraints method is provided to allow

the application to determine which constraints the User Agent

recognizes.

- MediaTrackSupportedConstraints getSupportedConstraints()

-

Returns a dictionary whose members are the constrainable

properties known to the User Agent. A supported constrainable

property MUST be represented and any constrainable properties not

supported by the User Agent MUST NOT be present in the returned

dictionary. The values returned represent what the browser implements

and will not change during a browsing session.
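An application can compare the returned dictionary against the constraints it intends to use. The following is a minimal sketch, assuming the dictionary has already been obtained from getSupportedConstraints(); the helper name `findUnsupported` and the sample dictionary are hypothetical:

```javascript
// Hypothetical helper: lists the constraint names in `wanted` that are
// absent from the dictionary returned by getSupportedConstraints().
function findUnsupported(supported, wanted) {
  return Object.keys(wanted).filter((name) => !(name in supported));
}

// Illustrative stand-in for a dictionary a User Agent might return;
// real values come from navigator.mediaDevices.getSupportedConstraints().
const sampleSupported = { width: true, height: true, frameRate: true };

const missing = findUnsupported(sampleSupported, {
  width: 1280,
  height: 720,
  facingMode: "user",
});
console.log(missing); // only "facingMode" is absent from sampleSupported
```

Because unsupported properties are not present in the dictionary at all, a presence check is sufficient here.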

- Promise<MediaStream> getUserMedia(MediaStreamConstraints constraints)

-

Prompts the user for permission to use their webcam or other

video or audio input.

The constraints argument is a dictionary of type

MediaStreamConstraints.

This method returns a promise. The promise will be fulfilled with

a suitable MediaStream object if the user accepts

valid tracks as described below.

The promise will be rejected if there is a failure in finding

valid tracks or if the user denies permission, as described

below.

When the getUserMedia()

method is called, the User Agent MUST run the following steps:

-

Let constraints be the method's first argument.

-

Let requestedMediaTypes be the set of media types

in constraints with either a dictionary value or a

value of "true".

-

If requestedMediaTypes is the empty set, return a

promise rejected with a TypeError.

-

Let p be a new promise.

-

Run the following steps in parallel:

-

Let finalSet be an (initially) empty set.

-

For each media type T in

requestedMediaTypes,

-

For each possible source for that media type,

construct an unconstrained MediaStreamTrack with that

source as its source.

Call this set of tracks the

candidateSet.

If candidateSet is the empty set, reject

p with a new DOMException

object whose name attribute has the

value NotFoundError and abort these

steps.

- If the value of the T entry of

constraints is "true", set CS to the empty

constraint set (no constraint). Otherwise, continue with

CS set to the value of the T entry of

constraints.

-

Run the SelectSettings algorithm

on each track in candidateSet with

CS as the constraint set. If the algorithm

does not return undefined, add the track to

finalSet. This eliminates devices unable to

satisfy the constraints, by verifying that at least one

settings dictionary exists that satisfies the

constraints.

If finalSet is the empty set, let

constraint be any required constraint whose

fitness distance was infinity for all settings dictionaries

examined while executing the

SelectSettings algorithm, let

message be either undefined or an

informative human-readable message, and reject p

with a new OverconstrainedError created

by calling OverconstrainedError(constraint,

message), then abort these steps.

This error gives information about

what the underlying device is not capable of producing,

before the user has given any authorization to any device,

and can thus be used as a fingerprinting surface.

-

Optionally, e.g., based on a previously-established user

preference, for security reasons, or due to platform

limitations, jump to the step labeled Permission

Failure below.

-

Let originIdentifier be the [[!HTML51]]

top-level browsing context's origin.

-

If the current [[!HTML51]]

browsing context is a [[!HTML51]]

nested browsing context whose origin is different from

originIdentifier, let originIdentifier

be the result of combining originIdentifier and

the current

browsing context's origin.

-

Prompt the user in a User Agent specific manner for

permission to provide the origin, identified by

originIdentifier, with a

MediaStream object representing a media

stream.

The provided media MUST include precisely one track of

each media type in requestedMediaTypes from the

finalSet. The decision of which tracks to choose

from the finalSet is completely up to the User

Agent and may be determined by asking the user. Once

selected, the source of a

MediaStreamTrack MUST NOT change.

The User Agent MAY use the value of the computed "fitness

distance" from the SelectSettings algorithm, or any

other internally-available information about the devices, as

an input to the selection algorithm.

User Agents are encouraged to default to using the user's

primary or system default camera and/or microphone (when

possible) to generate the media stream. User Agents MAY allow

users to use any media source, including pre-recorded media

files.

If the user grants permission to use local recording

devices, User Agents are encouraged to include a prominent

indicator that the devices are "hot" (i.e., an "on-air" or

"recording" indicator), as well as a "device accessible"

indicator showing that the page has been granted access to

the source.

If the user denies permission, jump to the step labeled

Permission Failure below. If the user never

responds, this algorithm stalls on this step.

If the user grants permission but a hardware error such as

an OS/program/webpage lock prevents access, reject

p with a new DOMException

object whose name attribute has the value

NotReadableError and abort these steps.

If the user grants permission but device access fails for

any reason other than those listed above, reject p

with a new DOMException object whose

name attribute has the value

AbortError and abort these steps.

-

Let stream be the

MediaStream object for which the user

granted permission.

-

Run the ApplyConstraints() algorithm on all

tracks in stream with the appropriate

constraints.

-

Resolve p with stream and abort

these steps.

-

Permission Failure: Reject p with a

new DOMException object whose

name attribute has the value

SecurityError.

-

Return p.
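The pre-permission part of the steps above (computing requestedMediaTypes, building each candidateSet and filtering it into finalSet) can be sketched as follows. This is a simplified model, not a User Agent implementation: `sourcesByType` is a hypothetical table of available sources, `satisfies` is a hypothetical stand-in for the SelectSettings algorithm, DOMException and OverconstrainedError are modelled as plain objects with a `name`, and the promise is resolved with finalSet instead of continuing to the permission prompt.

```javascript
// Simplified model of the pre-permission steps. All names are illustrative.
// sourcesByType: e.g. { audio: [src, ...], video: [src, ...] } (hypothetical).
// satisfies(source, cs): stand-in for SelectSettings; returns false when no
// settings dictionary of the source can satisfy the constraint set cs.
function selectFinalSet(constraints, sourcesByType, satisfies) {
  // requestedMediaTypes: entries whose value is a dictionary or true.
  const requestedMediaTypes = ["audio", "video"].filter((t) => {
    const v = constraints[t];
    return v === true || (typeof v === "object" && v !== null);
  });
  if (requestedMediaTypes.length === 0) {
    return Promise.reject(new TypeError("no media type requested"));
  }

  // The specification runs these steps in parallel; this model runs them
  // synchronously inside the promise executor.
  return new Promise((resolve, reject) => {
    const finalSet = [];
    for (const type of requestedMediaTypes) {
      // One unconstrained candidate track per possible source.
      const candidateSet = (sourcesByType[type] || []).map((source) => ({
        kind: type,
        source,
      }));
      if (candidateSet.length === 0) {
        reject({ name: "NotFoundError" });
        return;
      }
      // A value of true means the empty constraint set (no constraint).
      const cs = constraints[type] === true ? {} : constraints[type];
      finalSet.push(...candidateSet.filter((t) => satisfies(t.source, cs)));
      // Checked inside the loop, following the text. The real error also
      // carries the offending constraint and an optional message.
      if (finalSet.length === 0) {
        reject({ name: "OverconstrainedError" });
        return;
      }
    }
    resolve(finalSet);
  });
}
```

For example, with two video sources of which only one can reach a requested minimum width, the model resolves with a single candidate track; with no audio source and `{ audio: true }`, it rejects with `NotFoundError`.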

In the algorithm above, constraints are checked twice: once at

device selection, and once after access approval. Time may have passed

between those checks, so it is conceivable that the selected device is

no longer suitable. In this case, a NotReadableError will result.
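Applications can branch on the `name` of the rejection reason to tell these outcomes apart. The following is a minimal sketch covering the error names mentioned in the algorithm above; the helper name `describeGetUserMediaError` and the message wording are hypothetical:

```javascript
// Hypothetical helper: maps the rejection reasons named in the algorithm
// above to short descriptions. The wording of the messages is illustrative.
function describeGetUserMediaError(error) {
  switch (error.name) {
    case "TypeError":
      return "No media type was requested.";
    case "NotFoundError":
      return "No source exists for a requested media type.";
    case "OverconstrainedError":
      return "No available device can satisfy the required constraints.";
    case "SecurityError":
      return "Permission was denied.";
    case "NotReadableError":
      return "The device could not be accessed or is no longer suitable.";
    case "AbortError":
      return "Device access failed for another reason.";
    default:
      return "Unexpected error: " + error.name;
  }
}
```

In a browser, such a helper would typically be used in the rejection handler of the promise returned by getUserMedia().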

MediaStreamConstraints

The MediaStreamConstraints dictionary is used to instruct

the User Agent what sort of MediaStreamTracks to include in the

MediaStream returned by getUserMedia().

- (boolean or MediaTrackConstraints) video = false

-

If true, it requests that the returned

MediaStream contain a video track. If a Constraints

structure is provided, it further specifies the nature and settings

of the video Track. If false, the MediaStream

MUST NOT contain a video Track.

- (boolean or MediaTrackConstraints) audio = false

-

If true, it requests that the returned

MediaStream contain an audio track. If a Constraints

structure is provided, it further specifies the nature and settings

of the audio Track. If false, the MediaStream

MUST NOT contain an audio Track.
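A few example dictionaries may make the member defaults concrete. This is a minimal sketch; the helper `requestedKinds` is hypothetical, and the use of `width` as a constrainable property is an assumption made for illustration:

```javascript
// Hypothetical helper: applies the dictionary defaults (video = false,
// audio = false) and reports which track kinds a request asks for.
function requestedKinds(constraints) {
  const { video = false, audio = false } = constraints;
  const kinds = [];
  if (audio !== false) kinds.push("audio");
  if (video !== false) kinds.push("video");
  return kinds;
}

// Requests an audio track only; video defaults to false.
const audioOnly = { audio: true };

// Requests both kinds; the video member is a constraints dictionary
// (the width property is assumed here for illustration).
const both = { audio: true, video: { width: 1280 } };
```

Here `requestedKinds(audioOnly)` yields only `"audio"`, while `requestedKinds(both)` yields both kinds, and an empty dictionary requests nothing.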

NavigatorUserMediaSuccessCallback

- MediaStream stream

-

MediaStream object representing the stream to

which the user granted permission as described in the

navigator.getUserMedia() algorithm.

Implementation Suggestions

Resource reservation